To bring some fun into the application for users who enter data, we are thinking about adding game-like achievements.

They could also help motivate users to explore the application, give feedback and similar.

Here are some ideas, feel free to add your own.

Interesting but has to be updated manually for users in every version of OpenAtlas they are part of.

Tools for anthropological analyses to use directly while working with human remains. They allow the acquisition of basic data such as age, sex, and pathologies for use in an anthropological and archaeological context.

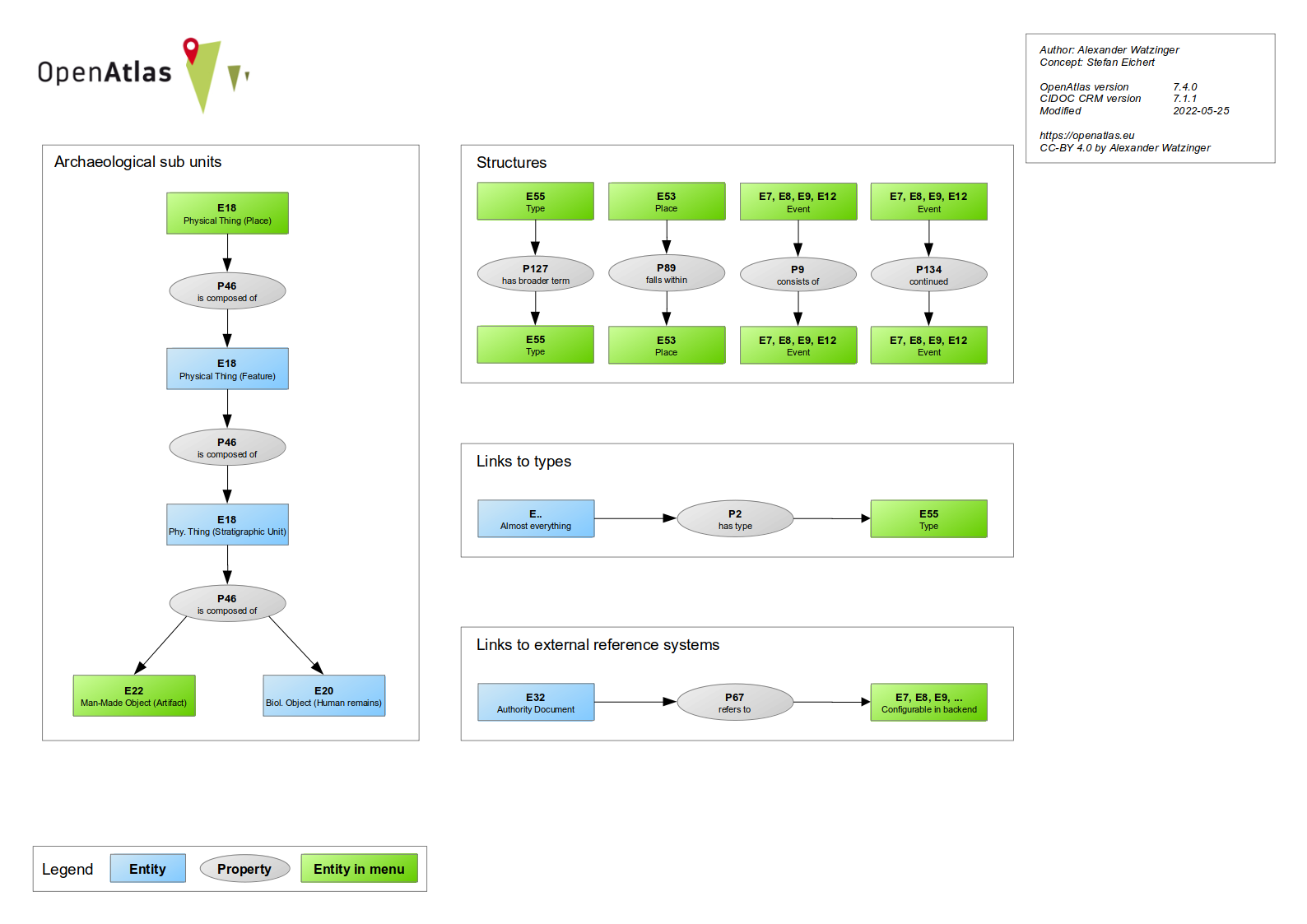

In the model, this is connected to the burial as a stratigraphic unit (E18 physical object) and to human remains (E20 biological object).

For some aspects, entering data is already possible, but a better user interface is desirable. For other data, new ways of entering are required, e.g. for sex and age estimation.

For the (graphical) anthropological interface (#1473) the bones and bone parts have to be recorded, see concept

OpenAtlas provides a REST-like API to easily access data entered in OpenAtlas.

A complete overview of possible endpoints and usage is available at our swagger documentation.

Overview of available endpoints: Endpoints

The API can be accessed via the following schema: {domain}/api/{api version}/{endpoint} for example: demo.openatlas.eu/api/0.3/entity/1234

If advanced layout is selected in your profile, a link to the different formats of entities is shown on their specific page.

If the option Public is activated in site settings (default is off) the API can be used even when not logged in.

Overview of all parameters used in different versions: Parameters

Endpoints can take multiple parameters appended to the URL, e.g.: demo.openatlas.eu/api/code/actor?sort=desc&limit=300

The first parameter is preceded by a ? to mark the beginning of the parameter section. Further parameters are added with &.

api/code/actor?sort=desc&limit=300
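The URL schema and the parameter syntax described above can be sketched in a few lines of Python. This is only an illustration: the helper name `build_api_url` and the `https://` scheme are assumptions and not part of OpenAtlas.

```python
from urllib.parse import urlencode


def build_api_url(domain, version, endpoint, params=None):
    """Compose {domain}/api/{api version}/{endpoint} plus an optional query string.

    Hypothetical helper; the https scheme is an assumption.
    """
    url = f"https://{domain}/api/{version}/{endpoint}"
    if params:
        # The ? opens the parameter section, urlencode joins the pairs with &.
        url += "?" + urlencode(params)
    return url


print(build_api_url("demo.openatlas.eu", "0.3", "entity/1234"))
print(build_api_url(
    "demo.openatlas.eu", "0.3", "code/actor",
    [("sort", "desc"), ("limit", 300)]))
```

Passing the parameters as a list of pairs keeps their order and allows the same parameter name to be repeated.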

Please visit CORS for more information.

general development

Versioning

The API can be accessed in two ways: either from the user interface of an OpenAtlas application or, if the settings allow it, from another application.

Please also refer to the SwaggerHub documentation: https://app.swaggerhub.com/apis-docs/ctot-nondef/OpenAtlas/0.2

These endpoints can provide full information about one or more entities. The output format is the Linked Places Format (LPF). Alternatively, a simple GeoJSON format and multiple RDF serializations derived from the LPF are available.

Retrieves a representation of an entity through the ID.

Retrieves a JSON with a list of entities based on their CIDOC CRM class code. The output contains a results and a pagination key. All codes available in OpenAtlas can be found under OpenAtlas and CIDOC CRM. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a JSON with a list of entities based on their OpenAtlas view name. Available categories can be found at OpenAtlas and CIDOC CRM. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a JSON with a list of entities based on their OpenAtlas system class. Available categories can be found at OpenAtlas and CIDOC CRM. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

With the query endpoint, one can combine the three endpoints above in a single query. Each request has to be a new parameter. Possible parameters are:

For more details on the different queries, please consult the associated section. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves the latest entries made in the OpenAtlas database. The number given after /latest is the number of entities retrieved and can be any integer from 1 to 100 inclusive.

Retrieves a list of entities which are linked to the entity with the given ID. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a list of entities based on their OpenAtlas type. A possible ID can be obtained, for example, from the type_tree or node_overview endpoint. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a list of entities based on their OpenAtlas type. This also includes all entities which are connected to a subtype. A possible ID can be obtained, for example, from the type_tree or node_overview endpoint. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a JSON list of entity names, IDs and URLs, based on their OpenAtlas type. Be aware that "Historical Place" and "Administrative Units" cannot be retrieved this way.

Retrieves a JSON list of entity names, IDs and URLs, based on their OpenAtlas type. This also includes all subtypes of the given type ID. Be aware that "Historical Place" and "Administrative Units" cannot be retrieved this way.

Retrieves a JSON list of entity names, IDs and URLs, from the first layer of subunits of the given entity ID.

Retrieves a JSON list of entity names, IDs and URLs, of all subunits of the given entity ID.

Retrieves a detailed JSON list of all OpenAtlas types. This also includes a list of the children of each type.

Retrieves a JSON list of all OpenAtlas types sorted by custom, places, standard and value.

Provides a list of all available system classes, their CIDOC CRM mapping, the view they belong to, the icon used and the English name.

Retrieves a json of the content (Intro, Legal Notice, Contact and the size for processed images) from the OpenAtlas instance. The language can be chosen with the lang parameter (en or de).

Retrieves a list of all selected geometries in the database in a standard GeoJSON format. This endpoint should be used for map overviews.

Retrieves a list with the number of entities each system class has.

Provides the image of the requested ID. Be aware, the image will only be displayed if:

Not all endpoints support all parameters. Also, some endpoints have additional unique parameter options, which are described in their sections.

| path\parameter | type_id | format | page | sort | column | limit | filter | first | last | show | count | download | lang | geometry | image_size |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| entity | x | x | x | ||||||||||||

| code | x | x | x | x | x | x | x | x | x | x | x | x | |||

| system_class | x | x | x | x | x | x | x | x | x | x | x | x | |||

| entities_linked_to_entity | x | x | x | x | x | x | x | x | x | x | x | x | |||

| type_entities | x | x | x | x | x | x | x | x | x | x | x | x | |||

| type_entities_all | x | x | x | x | x | x | x | x | x | x | x | x | |||

| class | x | x | x | x | x | x | x | x | x | x | x | x | |||

| latest | x | x | x | x | x | x | x | x | x | x | x | x | |||

| query | x | x | x | x | x | x | x | x | x | x | x | x | |||

| node_entities | x | x | |||||||||||||

| node_entities_all | x | x | |||||||||||||

| subunit | x | x | |||||||||||||

| subunit_hierarchy | x | x | |||||||||||||

| type_tree | x | x | |||||||||||||

| node_overview | x | x | |||||||||||||

| geometric_entities | x | x | x | ||||||||||||

| content | x | x | |||||||||||||

| classes | |||||||||||||||

| system_class_count | |||||||||||||||

| display | x |

<'asc', 'desc'>

?sort=<'asc','desc'>

If multiple sort parameters are used, the first valid sort input will be used.

It does not matter if the words are uppercase or lowercase (e.g. DeSc or aSC), but the query only takes asc or desc as valid input. If no valid input is provided, the results are ordered ascending (ASC).

<'id', 'class_code', 'name', 'description', 'created', 'modified', 'system_type', 'begin_from', 'begin_to', 'end_from', 'end_to'>

The column parameter declares which columns in the table are sorted with the sort parameter.

?column=<'id', 'class_code', 'name', 'description', 'created', 'modified', 'system_type', 'begin_from', 'begin_to', 'end_from', 'end_to'>

If multiple column parameters are used, a list is created in the order in which the parameters are given (e.g. ?column=name&column=description&column=id will order by name, then description, then id).

It does not matter if the words are uppercase or lowercase (e.g. Name, ID, DeScrIPtioN or Class_Code). If no valid input is provided, the results are ordered by name.
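The rules for sort and column described above (case-insensitive matching, first valid sort value wins, fallback to asc and name) can be sketched as follows. The function names are hypothetical and this is not the OpenAtlas implementation, only a reading of the rules stated in the text.

```python
VALID_COLUMNS = (
    "id", "class_code", "name", "description", "created", "modified",
    "system_type", "begin_from", "begin_to", "end_from", "end_to")


def resolve_sort(values):
    """Return the first valid sort direction, ignoring case; default is asc."""
    for value in values:
        if value.lower() in ("asc", "desc"):
            return value.lower()
    return "asc"


def resolve_columns(values):
    """Keep valid columns in the given order, ignoring case; default is name."""
    columns = [v.lower() for v in values if v.lower() in VALID_COLUMNS]
    return columns or ["name"]


print(resolve_sort(["sideways", "DeSc", "asc"]))  # desc
print(resolve_columns(["Name", "bogus", "ID"]))   # ['name', 'id']
```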

<number>

The limit parameter declares how many results will be returned.

?limit=<number>

If multiple limit parameters are used, the first valid limit input will be used. Limit only takes positive numbers.

<=, !=, <, <=, >, >=, LIKE, IN, AND, OR, AND NOT, OR NOT>

The filter parameter is used to specify which entries should be returned.

?filter=<XXX>

Please note that the filter values are translated directly into SQL. For example:

?filter=and|name|like|Ach&filter=or|id|gt|5432

AND e.name LIKE %%Ach%% OR e.id > 5432

?filter=or|id|gt|150&filter=anot|id|ne|200 ?filter=and|name|like|Ach
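How a filter value of the form logical|column|operator|value could map to an SQL fragment is sketched below. Only and, or, anot, like, gt and ne appear in the examples above; the codes onot, eq, lt, le and ge, the column whitelist and the function name are assumptions made for illustration. Unlike the direct translation described above, the sketch returns the value separately so it can be passed as a bound query parameter.

```python
# Codes seen in the examples: and, or, anot, like, gt, ne.
# The remaining codes are assumed by analogy.
LOGICAL = {"and": "AND", "or": "OR", "anot": "AND NOT", "onot": "OR NOT"}
COMPARE = {
    "eq": "=", "ne": "!=", "lt": "<", "le": "<=",
    "gt": ">", "ge": ">=", "like": "LIKE"}
COLUMNS = {"id", "name", "description", "class_code"}  # assumed whitelist


def parse_filter(raw):
    """Split one filter value into an SQL fragment and its bound value."""
    logical, column, operator, value = raw.split("|", 3)
    if column not in COLUMNS:
        raise ValueError(f"unsupported column: {column}")
    sql = f"{LOGICAL[logical.lower()]} e.{column} {COMPARE[operator.lower()]} %s"
    if operator.lower() == "like":
        value = f"%{value}%"  # match anywhere in the string
    return sql, value


for raw in ("and|name|like|Ach", "or|id|gt|5432"):
    print(parse_filter(raw))
```

Checking the column against a whitelist and binding the value keeps user input out of the SQL text itself.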

first=<id> OR last=<id> OR page=<int>

The page parameter will take any number as page number and provides the entities of this page.

The first parameter takes IDs and will show every entity after and including the named ID.

The last parameter takes IDs and will show every entity after the named ID.

?page=<int>

?first=<id>

?last=<id>

Page, first and last only take numbers. First and last have to be valid IDs. The table is sorted and filtered before pagination is applied.

?page=4 ?last=220 ?first=219
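The difference between page, first and last can be illustrated on an already sorted and filtered list of IDs. This is a sketch under assumptions: the function name is hypothetical, pages are assumed to start at 1, and the limit is assumed to apply to first and last as well.

```python
def paginate(ids, limit=20, page=None, first=None, last=None):
    """Return one slice of an already sorted and filtered list of IDs."""
    if first is not None:
        start = ids.index(first)      # start at the named ID (inclusive)
    elif last is not None:
        start = ids.index(last) + 1   # start after the named ID
    elif page is not None:
        start = (page - 1) * limit    # assumption: pages are counted from 1
    else:
        start = 0
    return ids[start:start + limit]


ids = list(range(210, 230))
print(paginate(ids, limit=3, first=219))  # [219, 220, 221]
print(paginate(ids, limit=3, last=220))   # [221, 222, 223]
print(paginate(ids, limit=5, page=2))     # [215, 216, 217, 218, 219]
```

`list.index` raises a `ValueError` for an unknown ID, which mirrors the requirement that first and last have to be valid IDs.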

<'when', 'types', 'relations', 'names', 'links', 'geometry', 'depictions', 'none'>

The show parameter takes the names of keys in the JSON output. If no value is given, every key is filled. If values are given, only those keys are shown. If the parameter contains none, no additional keys/values are shown. This only works with the Linked Places Format.

?show=<'when', 'types', 'relations', 'names', 'links', 'geometry', 'depictions', 'none'>

For each value, a new parameter has to be set. The value will be matched against a list of keywords, so wrong input will be ignored.

?show=when ?show=types ?show=types&show=when ?show=none

lp, geojson, pretty-xml, n3, turtle, nt, xml

With the format parameter, the output format of an entity representation can be selected. lp stands for Linked Places Format, which is the default. For information on other formats, please see API Output Formats.

?format=<lp, geojson, pretty-xml, n3, turtle, nt, xml>

Only the last format parameter counts as valid input. This parameter is not case-sensitive.

?format=lp ?format=geojson ?format=n3

<int>

The whole search query will be filtered by this Type ID. Multiple type_id parameters are valid and are connected with a logical OR connection.

?type_id=<id>

type_id only takes a valid type ID.

?type_id=<int>

<>

Returns a json with a number of the total count of the included entities.

?count

Only the presence of count triggers the function. Any value assigned to count is ignored.

?count

<>

Will trigger the download of the result of the request path.

?download

Only download will trigger the function. Download can have anything assigned to it, but this will be discarded.

?download

<'en', 'de'>

Selects the language in which the content will be displayed.

?lang

Default value is None, which means the default language of the OpenAtlas instance is taken.

?lang ?lang=en ?lang=DE

gisAll, gisPointAll, gisPointSupers, gisPointSubs, gisPointSibling, gisLineAll, gisPolygonAll

Filters which geometric entities will be retrieved through /geometric_entities. Multiple geometry parameters are valid. Be aware, this parameter is case-sensitive!

?geometry

The default value is gisAll. Be aware, this parameter is case-sensitive!

?geometry=gisPointSupers ?geometry=gisPolygonAll

The API can be accessed in two ways: either from the user interface of an OpenAtlas application or, if the settings allow it, from another application.

These endpoints can provide full information about one or more entities. The output format is the Linked Places Format (LPF). Alternatively, a simple GeoJSON format and multiple RDF serializations derived from the LPF are available.

Retrieves a representation of an entity through the ID.

Retrieves a JSON with a list of entities based on their CIDOC CRM class code. The output contains a results and a pagination key. All codes available in OpenAtlas can be found under OpenAtlas and CIDOC CRM. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a json with a list of entities based on their OpenAtlas view name. Available categories can be found at OpenAtlas and CIDOC CRM. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a json with a list of entities based on their OpenAtlas system class. Available categories can be found at OpenAtlas and CIDOC CRM. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

With the query endpoint, one can combine the three endpoints above in a single query. Each request has to be a new parameter. Possible parameters are:

For more details of the different queries, please consult the associated section. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically, and 20 entities are shown.

Retrieves the latest entries made in the OpenAtlas database. The number given after /latest is the number of entities retrieved and can be any integer from 1 to 100 inclusive.

Retrieves a list of entities, which are linked to the entity with the given id. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a list of entities based on their OpenAtlas type. A possible id can be obtained, for example, by the type_tree or node_overview endpoint. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a list of entities based on their OpenAtlas type. This also includes all entities which are connected to a subtype. A possible ID can be obtained, for example, from the type_tree or node_overview endpoint. The result can be filtered, ordered and manipulated through different parameters. By default, results are ordered alphabetically and 20 entities are shown.

Retrieves a detailed JSON list of all OpenAtlas types. This also includes a list of the children of each type.

Retrieves a JSON list of all OpenAtlas types sorted by custom, places, standard and value.

Takes only a valid place (E18) ID. Retrieves a list of the given place and all of its subunits. This endpoint provides a special Thanados format. With the format=xml parameter, an XML can be created.

Provides a list of all available system classes, their CIDOC CRM mapping, the view they belong to, the icon used and the English name.

Retrieves a json of the content (Intro, Legal Notice, Contact and the size for processed images) from the OpenAtlas instance. The language can be chosen with the lang parameter (en or de).

Retrieves a list of all selected geometries in the database in a standard GeoJSON format. This endpoint should be used for map overviews.

Retrieves a list with the number of entities each system class has.

Provides the image of the requested ID. Be aware, the image will only be displayed if:

Not all endpoints support all parameters. Also, some endpoints have additional unique parameter options, which are described in their sections.

| path\parameter | type_id | format | page | sort | column | limit | search | first | last | show | count | download | lang | geometry | image_size | export |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| entity | x | x | x | x | ||||||||||||

| code | x | x | x | x | x | x | x | x | x | x | x | x | x | |||

| system_class | x | x | x | x | x | x | x | x | x | x | x | x | x | |||

| entities_linked_to_entity | x | x | x | x | x | x | x | x | x | x | x | x | x | |||

| type_entities | x | x | x | x | x | x | x | x | x | x | x | x | x | |||

| type_entities_all | x | x | x | x | x | x | x | x | x | x | x | x | x | |||

| class | x | x | x | x | x | x | x | x | x | x | x | x | x | |||

| latest | x | x | x | x | x | x | x | x | x | x | x | x | x | |||

| query | x | x | x | x | x | x | x | x | x | x | x | x | x | |||

| type_entities | x | x | x | |||||||||||||

| type_entities_all | x | x | x | |||||||||||||

| subunit | x | x | ||||||||||||||

| subunit_hierarchy | x | x | ||||||||||||||

| type_tree | x | x | ||||||||||||||

| node_overview | x | x | ||||||||||||||

| geometric_entities | x | x | x | |||||||||||||

| content | x | x | ||||||||||||||

| classes | ||||||||||||||||

| system_class_count | ||||||||||||||||

| display | x |

<'asc', 'desc'>

?sort=<'asc','desc'>

If multiple sort parameters are used, the first valid sort input will be used.

It does not matter if the words are uppercase or lowercase (e.g. DeSc or aSC), but the query only takes asc or desc as valid input. If no valid input is provided, the results are ordered ascending (ASC).

<'id', 'name', 'cidoc_class', 'system_class'>

The column parameter declares which columns in the table are sorted with the sort parameter.

?column=<'id', 'name', 'cidoc_class', 'system_class'>

If multiple column parameters are used, a list is created in the order in which the parameters are given (e.g. ?column=name&column=id will order by name and then by id).

It does not matter if the words are uppercase or lowercase (e.g. Name, ID or Cidoc_Class). If no valid input is provided, the results are ordered by name.

<number>

The limit parameter declares how many results will be returned. limit=0 will return all entities.

?limit=<number>

If multiple limit parameters are used, the first valid limit input will be used. Limit only takes positive numbers.

The search parameter provides a tool to filter and search the data with logical operators.

Search parameter:

?search={}

Logical operators:

These are not mandatory. _or_ is the default value.

and, or

Compare operators:

equal, notEqual, like (1), greaterThan (2), greaterThanEqual (2), lesserThan (2), lesserThanEqual (2)

(1) Only for string categories

(2) Only for beginFrom, beginTo, endFrom, endTo, valueTypeID

Filterable categories:

entityName, entityDescription, entityAliases, entityCidocClass, entitySystemClass, entityID, typeID, valueTypeID, typeIDWithSubs, typeName, beginFrom, beginTo, endFrom, endTo, relationToID

The search parameter takes a JSON as value. Each key has to be a filterable category followed by a list/array. The items of this list are again JSON objects. There can be multiple search parameters. E.g.:

?search={"typeID":[{"operator":"equal","values":[123456]}], "typeName":[{"operator":"like","values":["Chain", "Bracelet", "Amule"],"logicalOperator":"and"}]}&search={"typeName":[{"operator":"equal","values":["Gold"]}], "beginFrom":[{"operator":"lesserThan","values":["0850-05-12"],"logicalOperator":"and"}]}

Every JSON in a search parameter field is logically connected with AND. E.g.:

?search={A:[{X}, {Y}], B: [M]} => Entities containing A(X and Y) and B(M)

Multiple search parameters are logically connected with OR. E.g.:

?search={A:[{X}, {Y}]}&search={A:[{M}]} => Entities containing A(X and Y) or A(M)

Within the list of a key, multiple queries are possible. A query contains a compare operator, the values to be searched and a logical operator that determines how the values are combined. E.g.:

{"operator":"equal","values":[123456],"logicalOperator":"or"}

{"operator":"notEqual","values":["string", "otherString"],"logicalOperator":"and"}

{"operator":"lesserThan","values":["0850-05-12"],"logicalOperator":"and"}

{"operator":"like","values":["Gol", "Amul"],"logicalOperator":"and"}

Values has to be a list of items. The items can be strings, integers or tuples (see note). Strings have to be enclosed in "" or '', while integers must not be quoted.

Note: the category valueTypeID searches for values of a type ID. It takes one or more two-valued tuples as list entries: (x,y), where x is the type ID and y is the searched value, which can be an int or a float. E.g.:

{"operator":"lesserThan","values":[(3142,543.3)],"logicalOperator":"and"}

The compare operators work like the mathematical operators. equal x=y, notEqual x!=y, greaterThan x>y , greaterThanEqual x>=y, lesserThan x<y, lesserThanEqual x<=y. The like operator searches for occurrence of the string, so a match can also occur in the middle of a word.

The outcome of the following example can be put into words:

?search={"typeID":[{"operator":"equal","values":[123456],"logicalOperator":"or"}], "typeName":[{"operator":"notEqual","values":["Chain", "Burial object"],"logicalOperator":"and"}]}&search={"typeName":[{"operator":"like","values":["Gol"],"logicalOperator":"or"}]}

Get entities which have the typeID 123456 AND NOT the types called "Chain" AND "Burial object", OR all entities which have a type whose name contains "Gol".
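Writing the nested JSON of a search parameter by hand is error-prone, so a client may prefer to build it from data structures. The following sketch assembles the query string for the example above; the function name is hypothetical, and percent-encoding the JSON is an assumption about what a client would usually do rather than a documented requirement.

```python
import json
from urllib.parse import parse_qs, quote


def search_query(*criteria):
    """Build '?search=...&search=...' from one dict per search parameter."""
    parts = [
        "search=" + quote(json.dumps(item, separators=(",", ":")))
        for item in criteria]
    return "?" + "&".join(parts)


first = {
    "typeID": [
        {"operator": "equal", "values": [123456], "logicalOperator": "or"}],
    "typeName": [
        {"operator": "notEqual", "values": ["Chain", "Burial object"],
         "logicalOperator": "and"}]}
second = {
    "typeName": [
        {"operator": "like", "values": ["Gol"], "logicalOperator": "or"}]}

query = search_query(first, second)
print(query)

# Round trip: decoding the query string yields the original criteria again.
decoded = [json.loads(value) for value in parse_qs(query[1:])["search"]]
print(decoded == [first, second])
```

Keys within one dict are combined with AND, while separate dicts become separate search parameters and are combined with OR, as described above.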

first=<id> OR last=<id> OR page=<int>

The page parameter will take any number as page number and provides the entities of this page.

The first parameter takes IDs and will show every entity after and including the named ID.

The last parameter takes IDs and will show every entity after the named ID.

?page=<int>

?first=<id>

?last=<id>

Page, first and last only take numbers. First and last have to be valid IDs. The table is sorted and filtered before pagination is applied.

?page=4 ?last=220 ?first=219

<'when', 'types', 'relations', 'names', 'links', 'geometry', 'depictions', 'none'>

The show parameter takes the names of keys in the JSON output. If no value is given, every key is filled. If values are given, only those keys are shown. If the parameter contains none, no additional keys/values are shown. This only works with the Linked Places Format.

?show=<'when', 'types', 'relations', 'names', 'links', 'geometry', 'depictions', 'none'>

For each value, a new parameter has to be set. The value will be matched against a list of keywords, so wrong input will be ignored.

?show=when ?show=types ?show=types&show=when ?show=none

<'P2', 'P67', 'P53', 'OA7', ...>

The relation_type parameter will take the property code from CIDOC CRM (P) and the OpenAtlas codes (OA) as values. The relation json field from the Linked Places Format will only show relations with the given code. This can significantly decrease the payload.

For each value, a new parameter has to be set. The value will be matched against the list of possible property codes. Wrong input will be ignored.

?relation_type=P2 ?relation_type=P67 ?relation_type=P2&relation_type=OA7

lp, geojson, pretty-xml, n3, turtle, nt, xml

With the format parameter, the output format of an entity representation can be selected. lp stands for Linked Places Format, which is the default. For information on other formats, please see API Output Formats.

?format=<lp, geojson, pretty-xml, n3, turtle, nt, xml>

Only the last format parameter counts as valid input. This parameter is not case-sensitive.

?format=lp ?format=geojson ?format=n3

<int>

The whole search query will be filtered by this Type ID. Multiple type_id parameters are valid and are connected with a logical OR connection.

?type_id=<id>

type_id only takes a valid type ID.

?type_id=<int>

<>

Returns a json with a number of the total count of the included entities.

?count

Only the presence of count triggers the function. Any value assigned to count is ignored.

?count

<>

Will trigger the download of the result of the request path.

?download

Only download will trigger the function. Download can have anything assigned to it, but this will be discarded.

?download

<'en', 'de'>

Selects the language in which the content will be displayed.

?lang

Default value is None, which means the default language of the OpenAtlas instance is taken.

?lang ?lang=en ?lang=DE

gisAll, gisPointAll, gisPointSupers, gisPointSubs, gisPointSibling, gisLineAll, gisPolygonAll

Filters which geometric entities will be retrieved through /geometric_entities. Multiple geometry parameters are valid. Be aware, this parameter is case-sensitive!

?geometry

The default value is gisAll. Be aware, this parameter is case-sensitive!

?geometry=gisPointSupers ?geometry=gisPolygonAll

csv, csvNetwork

Exports the result in the given format as a download. csv produces one single CSV file of the result. csvNetwork produces multiple CSV files intended for network analysis: one CSV per system class describing its entities, a link.csv showing the links between the entities, and a geometry.csv containing the geometries.

?export

?export=csv ?export=csvNetwork

/api/0.3/query will be removed. The idea is that all endpoints behind the resource entities can be chained with additional parameters, e.g.:

/api/entities/cidoc_code/E18?sort=desc/type/23/entity/512

If this turns out not to be possible in any form, query will come back.

This page is a discussion base and documentation about the authentication system of the API.

To be filled in (What are token-based authentication and CORS, advantages and disadvantages, usage, Flask compatibility?)

This information is currently outdated but planned to be updated.

For the API we want to create a more detailed error model. The error message should be machine- and human-readable, therefore we use JSON as the response format.

One example could be:

{
  "title": "Forbidden",
  "status": 403,
  "detail": "You don't have the permission to access the requested resource. Please authenticate with the server, either through login via the user interface or token based authentication.",
  "timestamp": "Fri, 29 May 2020 10:13:22 GMT"
}

As an RFC draft for API error messages states (https://tools.ietf.org/html/draft-nottingham-http-problem-07):

The following error codes are caught by the API error handler:

| Code | Description | Detail | Error Message |
|---|---|---|---|
| 400 | Bad Request | Client sent an invalid request, such as lacking a required request body or parameter | The request is invalid. The body or parameters are wrong. |
| 401 | Unauthorized | Client failed to authenticate with the server | You failed to authenticate with the server. |
| 403 | Forbidden | Client authenticated but does not have permission to access the requested resource | You don't have the permission to access the requested resource. Please authenticate with the server, either through login via the user interface or token based authentication. |
| 404 | Not Found | The requested resource does not exist | Something went wrong! Maybe only digits are allowed. Please check the URL. |
| 404a | Not Found | The requested resource does not exist | The requested entity doesn't exist. Try another ID. |
| 404b | Not Found | The requested resource does not exist | The syntax is incorrect. Only digits are allowed. For further usage, please confer the help page. |
| 404c | Not Found | The requested resource does not exist | The syntax is incorrect. Valid codes are: actor, event, place, source, reference and object. For further usage, please confer the help page. |
| 404d | Not Found | The requested resource does not exist | The syntax is incorrect. These class code is not supported. For the classes please confer the model. |
| 404e | Not Found | The requested resource does not exist | The syntax is incorrect. Only integers between 1 and 100 are allowed. |
| 404f | Not Found | The requested resource does not exist | The syntax is incorrect. Only valid operators are allowed. |
| 405 | Invalid Method | The method is not available | The method used is not supported. Right now only GET is allowed. |
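An error body with the four keys of the proposed model (title, status, detail, timestamp) could be assembled as sketched below. The function name is hypothetical, and taking the title from Python's `http.HTTPStatus` is an assumption; the OpenAtlas error handler may use its own descriptions (for example "Invalid Method" for 405, where the standard phrase is "Method Not Allowed").

```python
import json
from datetime import datetime, timezone
from email.utils import format_datetime
from http import HTTPStatus


def error_body(status, detail, now=None):
    """Return an error dict with title, status, detail and timestamp."""
    now = now or datetime.now(timezone.utc)
    return {
        "title": HTTPStatus(status).phrase,
        "status": status,
        "detail": detail,
        # HTTP-date format, e.g. 'Fri, 29 May 2020 10:13:22 GMT'
        "timestamp": format_datetime(now, usegmt=True)}


body = error_body(
    403,
    "You don't have the permission to access the requested resource.",
    now=datetime(2020, 5, 29, 10, 13, 22, tzinfo=timezone.utc))
print(json.dumps(body, indent=2))
```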

Participants: Bernhard Koschicek, Alexander Watzinger, Nina Brundke, Christoph Hoffmann, Stefan Eichert and special guest Smilla

Location: ACDH-CH, Alte Burse, Sonnenfelsgasse 19, 1010 Vienna

Time: 2020-08-09, 14:00

After we discussed the excellently presented and extensive recent development, we decided to switch to a more practical approach. Christoph and Stefan will write issues for the API to meet their needs for presentation sites, so that we can see and test the API in action.

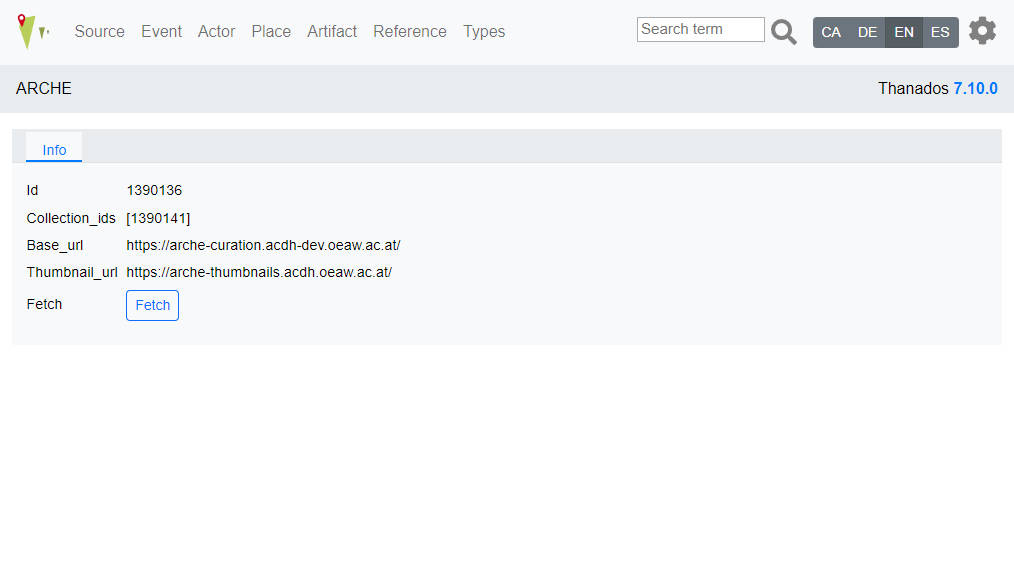

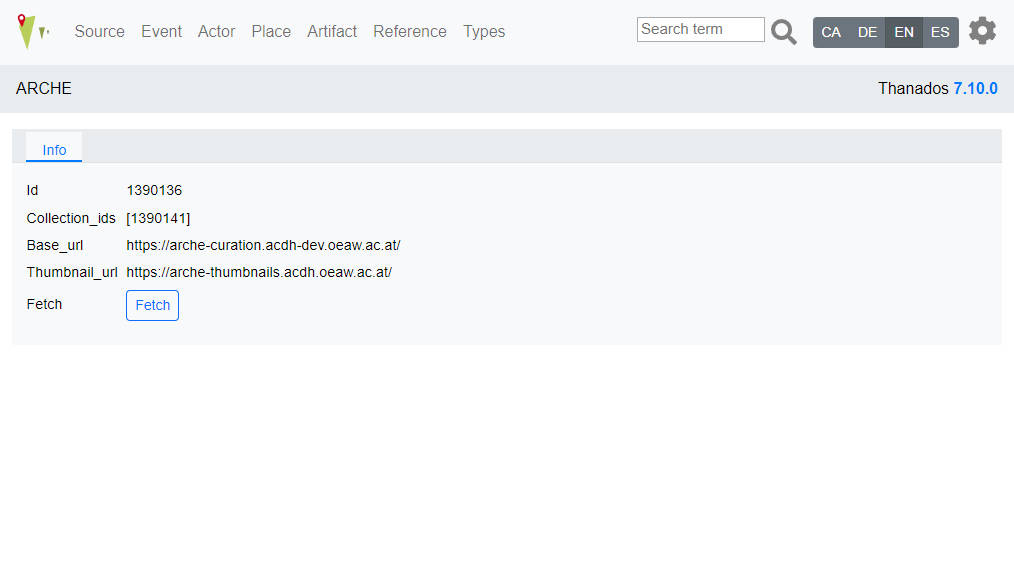

The ARCHE import is deactivated by default. To enable it, add the following dictionary to instance/production.py:

ARCHE = {
    'id': 1390136,  # ID of the top collection (acdh:TopCollection)
    'collection_ids': [1390141],  # IDs of the collections containing metadata.json files (acdh:Collection)
    'base_url': 'https://arche-curation.acdh-dev.oeaw.ac.at/',  # Base URL to get data from
    'thumbnail_url': 'https://arche-thumbnails.acdh.oeaw.ac.at/',  # URL of the ARCHE thumbnail service, no changes needed
}

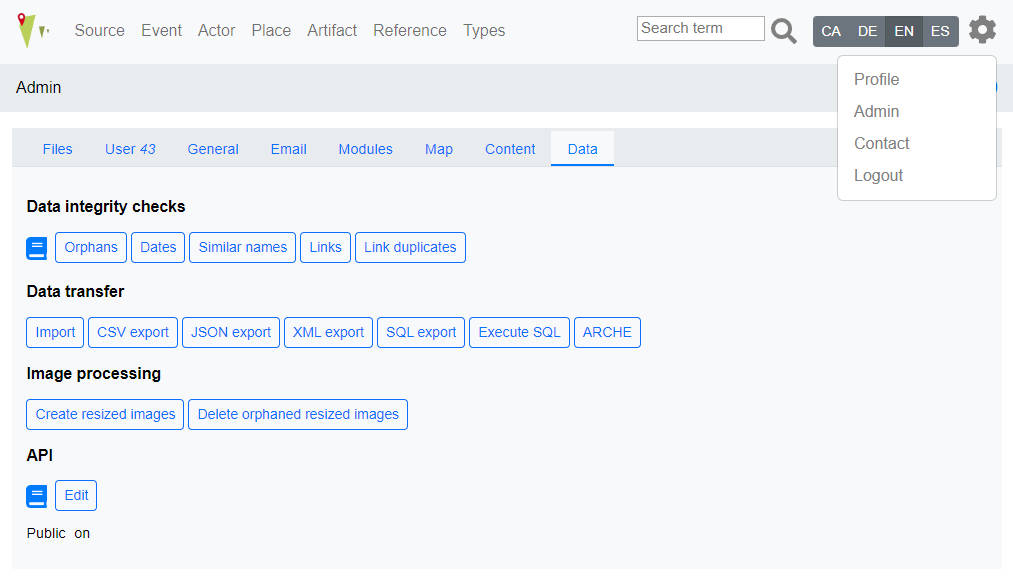

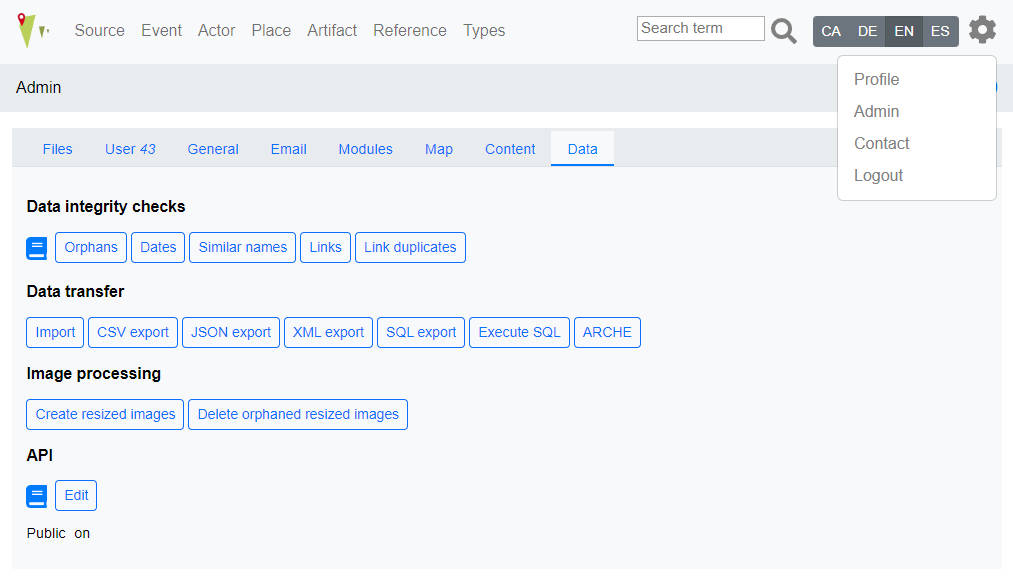

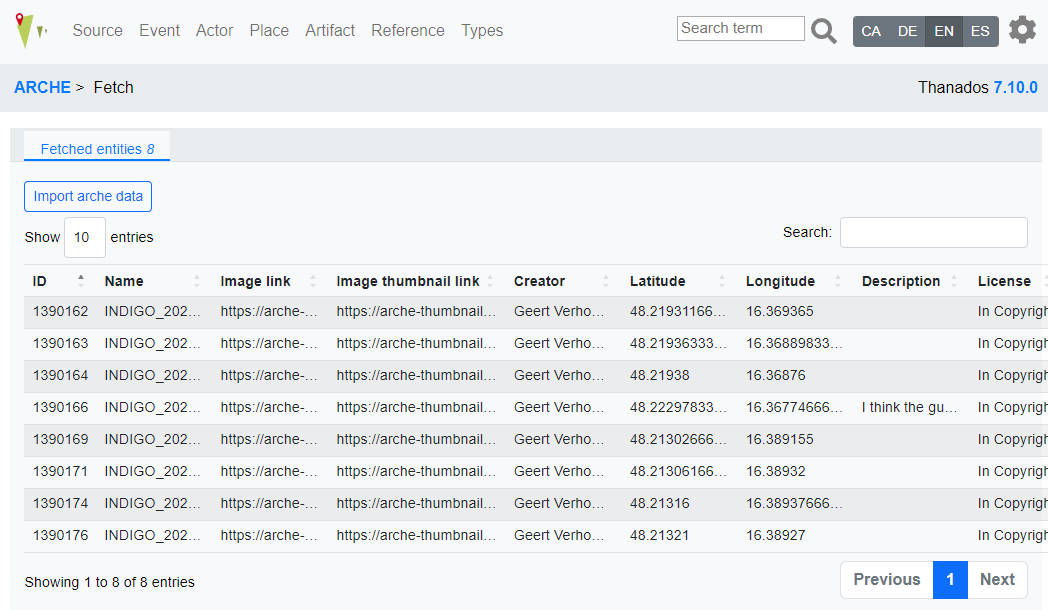

If the feature is enabled, every user can see an additional button called ARCHE in Admin -> Data, which leads to an information page showing the data provided in instance/production.py.

If the user belongs to the manager user group, a button called Fetch is displayed. Pressing Fetch will fetch data from ARCHE and check whether the data has already been imported into OpenAtlas (based on the artifact). If an entry is not present, a summary table is displayed with the graffiti that will be imported.

Pressing the button Import ARCHE data imports the data and, if necessary, creates new types, persons, etc.

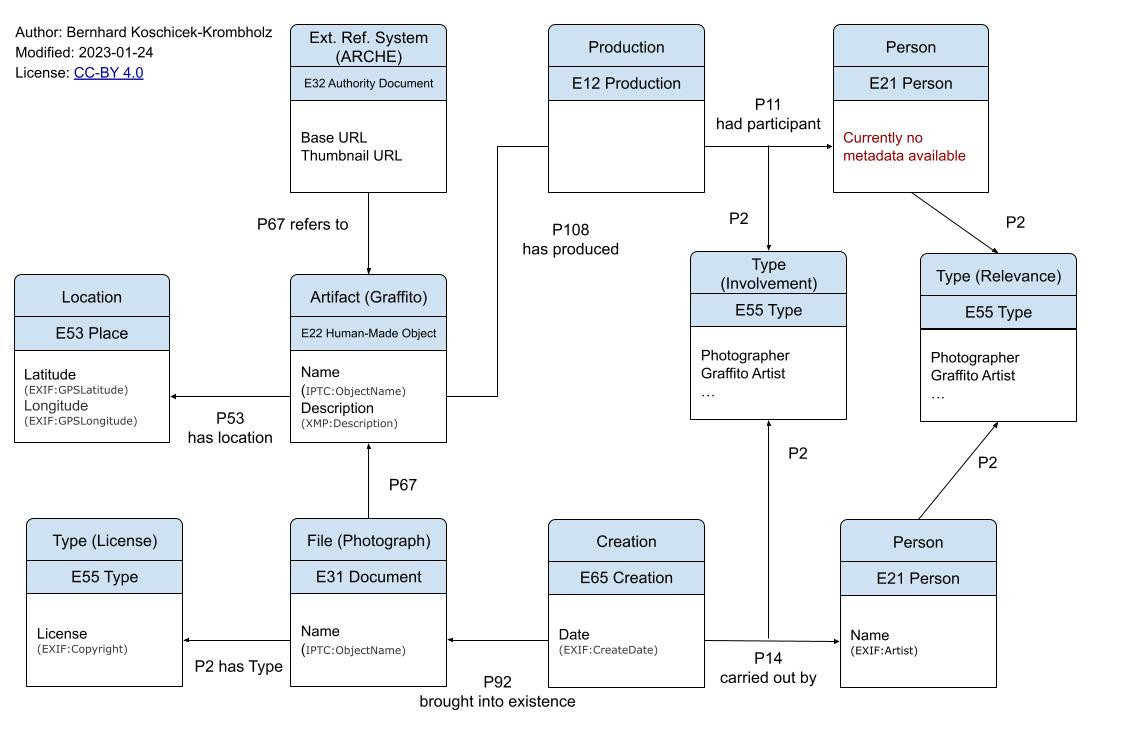

All data is gathered from [IMAGE_NAME]_metadata.json:

- 'image_id': image_id (ARCHE)
- 'image_link': image_url (ARCHE)
- 'image_link_thumbnail': thumbnail_url (ARCHE)
- 'creator': EXIF:Artist
- 'latitude': EXIF:GPSLatitude
- 'longitude': EXIF:GPSLongitude
- 'description': XMP:Description
- 'name': IPTC:ObjectName
- 'license': EXIF:Copyright
- 'date': EXIF:CreateDate
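The mapping above can be read as a simple lookup from metadata tags to import fields. The sketch below assumes that the metadata file is a flat JSON object keyed by tag names such as EXIF:Artist; the real file layout, the function name and the sample values are assumptions for illustration only.

```python
# Import field -> tag in [IMAGE_NAME]_metadata.json (taken from the list above)
FIELD_SOURCES = {
    "creator": "EXIF:Artist",
    "latitude": "EXIF:GPSLatitude",
    "longitude": "EXIF:GPSLongitude",
    "description": "XMP:Description",
    "name": "IPTC:ObjectName",
    "license": "EXIF:Copyright",
    "date": "EXIF:CreateDate"}


def extract_fields(metadata, image_id, image_url, thumbnail_url):
    """Collect the import fields; missing tags become None."""
    fields = {
        "image_id": image_id,
        "image_link": image_url,
        "image_link_thumbnail": thumbnail_url}
    for field, tag in FIELD_SOURCES.items():
        fields[field] = metadata.get(tag)
    return fields


# Made-up sample values, assuming a flat metadata object.
sample = {"EXIF:Artist": "Jane Doe", "IPTC:ObjectName": "Graffito 1"}
result = extract_fields(
    sample, 42, "https://example.org/42", "https://example.org/thumb/42")
print(result["creator"], result["name"], result["date"])
```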

Data provided from production.py:

ARCHE = {

'id': 1390136,

'collection_ids': [1390141],

'base_url': 'https://arche-curation.acdh-dev.oeaw.ac.at/',

'thumbnail_url': 'https://arche-thumbnails.acdh.oeaw.ac.at/'}

Documents archived for historical reasons.

Linked with: P117 occurs during

Domain: E5, E6, E8, E12

Range: E5, E6, E8, E12

Linked with: P107 has current or former member

Domain: E74, E40

Range: E21, E74, E40

Entries with * are obligatory and cannot be altered. Others are optional examples and are editable/extendable.

Types E55 are linked with E55 via P127 (has broader term)

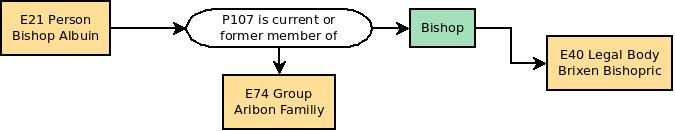

Definitions of an actor's function within a group or legal body. An actor can for example be member of a legal body and this membership is defined by a certain function/role during a certain period of time. E.g. Actor "Charlemagne" is member of the legal body "Frankish Reign" from 768-814 in the function of "King" and he is member of the legal body "Roman Empire" from 800 to 814 in the function "Emperor".

Bishop Abbot Pope King Emperor Count Duke

Types for Sources

Charter Testament Letter Contract

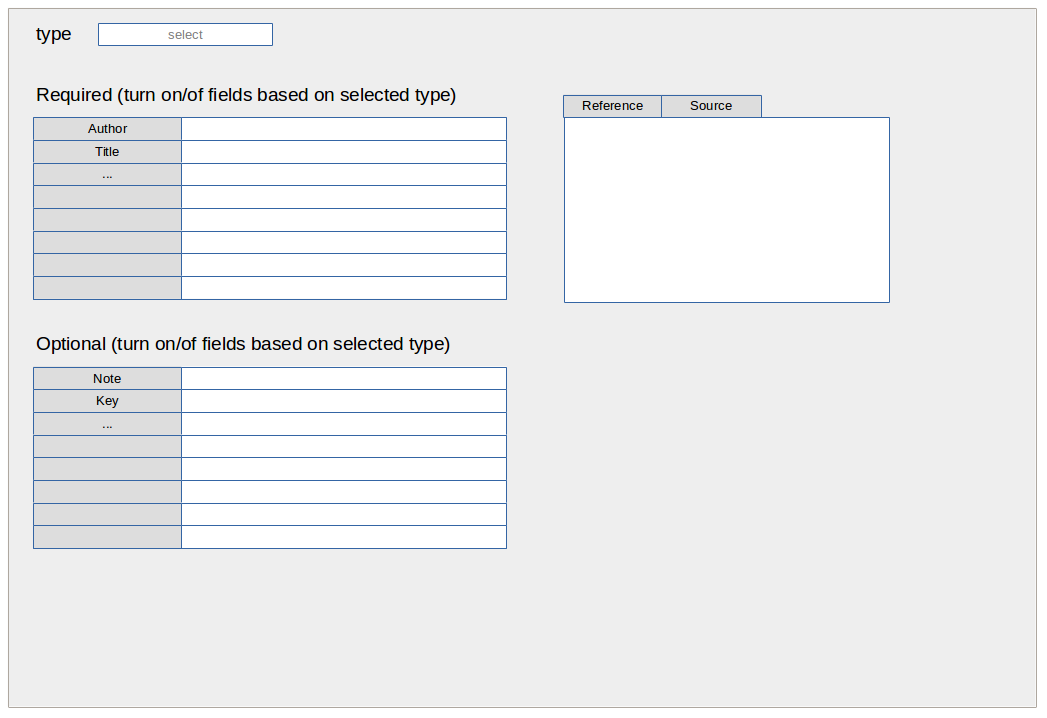

Definitions for the type of bibliographic items like "articles", "books", "proceedings" etc.

Article Book Inbook Mastersthesis Phdthesis unpublished

Definitions for the type of Source Editions like "Charter Edition" etc.

Charter Edition Letter Edition Chronicle Edition ...

Source Content* Source Original Text* Source Translation* Comment*

Categories for the types of information carriers. E.g. to determine if the physical manifestation of a medieval charter is an original, a later copy etc.

Original Document Copy of Document

Definitions of relationships between one actor and another. These relationships can be directional (e.g. parent of/child of: actor A is the father of actor B while actor B is the son of actor A) or be the same in both directions (e.g. friend of: actor A is friend of actor B and vice versa actor B is friend of actor A).

Kindredship Parent of (Child of) Social Friend of Enemy of Mentor of (Student of) Political Ally of Leader of (Retinue of) Economical Provider of (Customer of)

Exact date value From date value To date value

Exact position Near to a known position Within a known area

Categories for persons' gender

Female Male

Types for Places sites-example

Boundary Mark Burial Site Economic Site Infrastructure Military Facility Ritual Site Settlement Topographical Entity

Types of events

Change of Property Donation Sale Exchange Conflict Battle Raid

Categories to define the involvement of an actor within an event. E.g. "Napoleon" participated in the event "Invasion of Russia" as "Commander"

Creator Sponsor Victim Offender

E53 linked via P89 "falls within/contains" to E53 - e.g. Austria contains Wien/Wien falls within Austria

Administrative Units

Austria

Wien

Kärnten

Niederösterreich

Oberösterreich

Salzburg

Tirol

Steiermark

Vorarlberg

Burgenland

Germany

Italy

Czech Republic

Slovakia

Slovenia
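A place hierarchy built with P89 can be pictured as a simple parent lookup. The sketch below is only an illustration; the dictionary and the function name are hypothetical, and apart from Wien/Austria (stated above) the parent assignments are an assumption based on the list.

```python
# Child place -> parent place, i.e. the child "falls within" (P89) the parent.
# Only Wien -> Austria is stated in the text, the other entries are assumed.
FALLS_WITHIN = {
    'Austria': None,
    'Wien': 'Austria',
    'Kärnten': 'Austria',
    'Tirol': 'Austria',
    'Germany': None}


def falls_within(place: str, container: str) -> bool:
    """Return True if place lies directly or indirectly within container."""
    parent = FALLS_WITHIN.get(place)
    while parent is not None:
        if parent == container:
            return True
        parent = FALLS_WITHIN.get(parent)
    return False


print(falls_within('Wien', 'Austria'))
print(falls_within('Austria', 'Wien'))
```

The inverse direction ("contains") follows from the same data, so only one direction needs to be stored.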

E55 entities (but listed here as they belong to places)

used to determine the type of place e.g. country, province, district... Uses national terms due to each country's different systems

Country Bundesland Bezirk Gemeinde Katastralgemeinde Regierungsbezirk Landkreis Gemarkung Regioni Comune Province

E53 entities. These Places are for example historical regions like the "Medieval Kingdom of Serbia" or the early medieval "Duchy of Bavaria".

Historical Places Carantania Marcha Orientalis Comitatus Iauntal Kingdom of Serbia

For the (graphical) anthropological interface (#1473) the bones and bone parts have to be recorded, see concept

Initial meeting: 2024-12-09

Participants: Alex, Bernhard, Nina

The Image API server is responsible for delivering the images.

Since OpenAtlas relies on Debian packages, we recommend using IIPImage as the IIIF Image API server. Any other working IIIF Image API server can be used, provided it can handle tiled multi-resolution TIFF and reads the images from a folder.

For installation of the IIPImage server see install notes of OpenAtlas.

java -version

-bash: java: command not found

sudo apt install default-jre

cd /var/www

wget https://github.com/cantaloupe-project/cantaloupe/releases/download/v5.0.5/cantaloupe-5.0.5.zip

7z x cantaloupe-5.0.5.zip

mv cantaloupe-5.0.5 cantaloupe

cd cantaloupe

cp cantaloupe.properties.sample cantaloupe.properties

vim cantaloupe.properties

FilesystemSource.BasicLookupStrategy.path_prefix = /var/www/iiif/

# Enables the Control Panel, at /admin.

endpoint.admin.enabled = true

endpoint.admin.username = admin

endpoint.admin.secret = password

sudo a2enmod headers

sudo a2enmod proxy_http

sudo vim /etc/apache2/sites-available/cantaloupe.conf

<VirtualHost *:80>

# X-Forwarded-Host will be set automatically by the web server.

RequestHeader set X-Forwarded-Proto "https"

RequestHeader set X-Forwarded-Port "80"

RequestHeader set X-Forwarded-Path /

ServerName apache-server

AllowEncodedSlashes NoDecode

ErrorLog /var/log/apache2/cantaloupe_error.log

CustomLog /var/log/apache2/cantaloupe_access.log combined

ProxyPass / http://YOUR-DOMAIN.at:8182/ nocanon

ProxyPassReverse / http://YOUR-DOMAIN.at:8182/

ProxyPassReverseCookieDomain YOUR-DOMAIN.at apache-server

ProxyPreserveHost on

</VirtualHost>

sudo a2ensite cantaloupe.conf

sudo service apache2 restart

sudo mkdir /etc/cantaloupe/

openssl pkcs12 -export -out /etc/cantaloupe/ssl-certificate.pfx -inkey /etc/letsencrypt/live/YOUR-DOMAIN.at/privkey.pem -in /etc/letsencrypt/live/YOUR-DOMAIN.at/cert.pem -certfile /etc/letsencrypt/live/YOUR-DOMAIN.at/fullchain.pem

sudo chown bkoschicek:www-data /etc/cantaloupe/ssl-certificate.pfx

# !! Configures the HTTPS server. (Standalone mode only.)

https.enabled = true

https.host = 0.0.0.0

https.port = 8183

# !! Available values are `JKS` and `PKCS12`. (Standalone mode only.)

https.key_store_type = PKCS12

https.key_store_password = PASSWORD

https.key_store_path = /etc/cantaloupe/ssl-certificate.pfx

https.key_password = PASSWORD

sudo vim /etc/systemd/system/cantaloupe.service

[Unit]

Description=Cantaloupe Image Server

After=network.target

[Service]

ExecStart=/usr/bin/java -Dcantaloupe.config=/var/www/cantaloupe/cantaloupe.properties -Xmx2g -jar /var/www/cantaloupe/cantaloupe-5.0.5.jar

Restart=on-failure

User=root

WorkingDirectory=/var/www/cantaloupe/

[Install]

WantedBy=multi-user.target

sudo systemctl daemon-reload

sudo systemctl enable cantaloupe.service

sudo systemctl start cantaloupe.service

Since IIPImage is not easy to install on Windows, and we don't really need it for development, we suggest working with Cantaloupe to get an on-the-fly IIIF server.

scoop install main/libvips

FilesystemSource.BasicLookupStrategy.path_prefix = C:\Users\bkoschicek\PycharmProjects\iiif\

java -Dcantaloupe.config=C:/cantaloupe-5.0.5/cantaloupe.properties -Xmx2g -jar cantaloupe-5.0.5.jar

http://localhost:8182/iiif/2/image.jpg/info.json

http://localhost:8182/iiif/2/image.jpg/full/full/0/default.jpg
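The two test URLs above follow the IIIF Image API 2 pattern {base}/{identifier}/info.json and {base}/{identifier}/{region}/{size}/{rotation}/{quality}.{format}. A minimal Python sketch for building such URLs, e.g. to check a local Cantaloupe instance, could look like this; the function names are hypothetical and not part of OpenAtlas.

```python
from urllib.parse import quote


def iiif_info_url(base_url: str, identifier: str) -> str:
    """Build the URL of the image information document (info.json)."""
    return f"{base_url.rstrip('/')}/{quote(identifier, safe='')}/info.json"


def iiif_image_url(
        base_url: str,
        identifier: str,
        region: str = 'full',
        size: str = 'full',
        rotation: int = 0,
        quality: str = 'default',
        format_: str = 'jpg') -> str:
    """Build an image request URL following the IIIF Image API 2 pattern."""
    # The identifier is percent-encoded so that slashes in file names
    # do not break the path segments
    return (
        f"{base_url.rstrip('/')}/{quote(identifier, safe='')}"
        f"/{region}/{size}/{rotation}/{quality}.{format_}")


base = 'http://localhost:8182/iiif/2'
print(iiif_info_url(base, 'image.jpg'))
print(iiif_image_url(base, 'image.jpg'))
```

With the default arguments the two calls reproduce the URLs listed above.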

OpenAtlas uses several shortcuts in order to simplify connections between entities that are always used the same way.

These shortcuts are named OA + the respective number. Currently OpenAtlas uses 3 shortcuts:

E39 (Actor) - P11i (participated in) - E5 (Event) - P11 (had participant) - E39 (Actor)

The connecting event is defined by an entity of class E55 (Type):

[Relationship from Stefan to Joachim (E5)] has type [Son to Father (E55)]

E77 (Persistent Item) - P92i (was brought into existence by) - E63 (Beginning of Existence) - P7 (took place at) - E53 (Place)

E77 (Persistent Item) - P93i (was taken out of existence by) - E64 (End of Existence) - P7 (took place at) - E53 (Place)

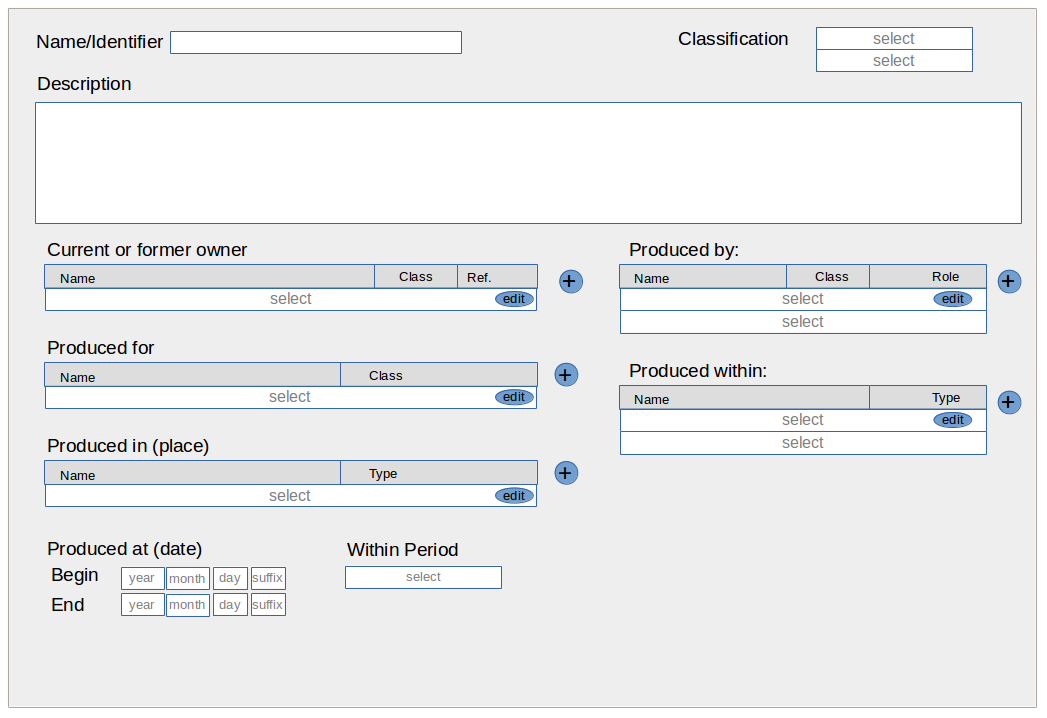

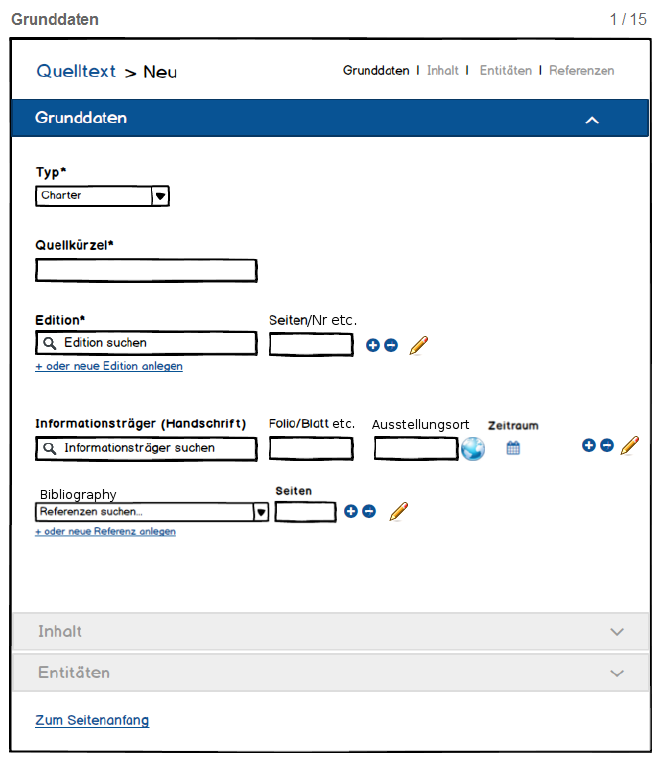

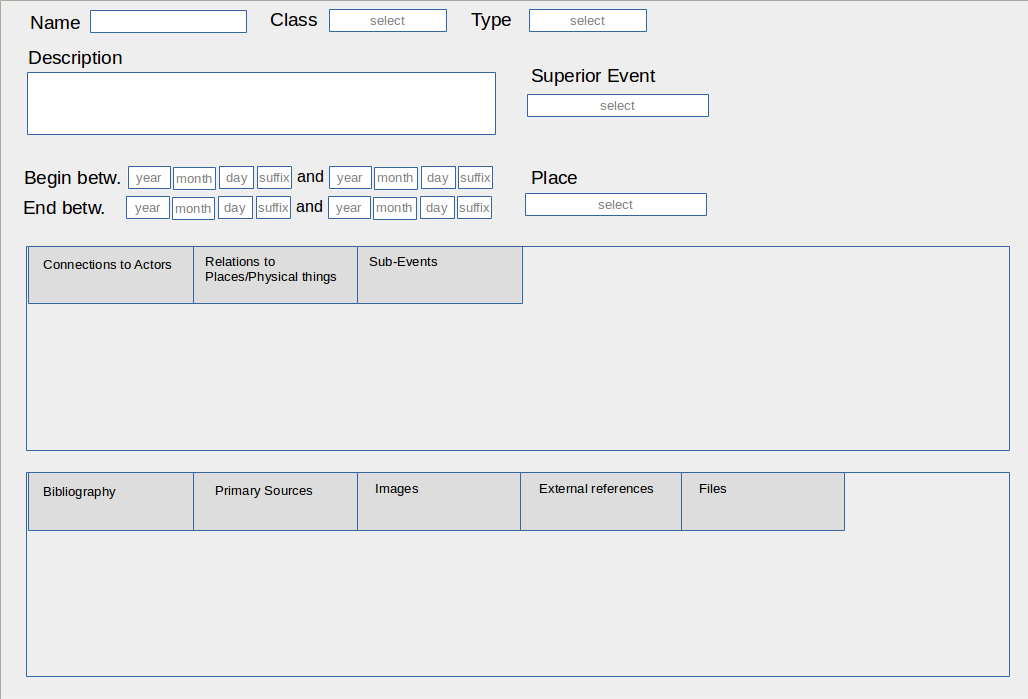

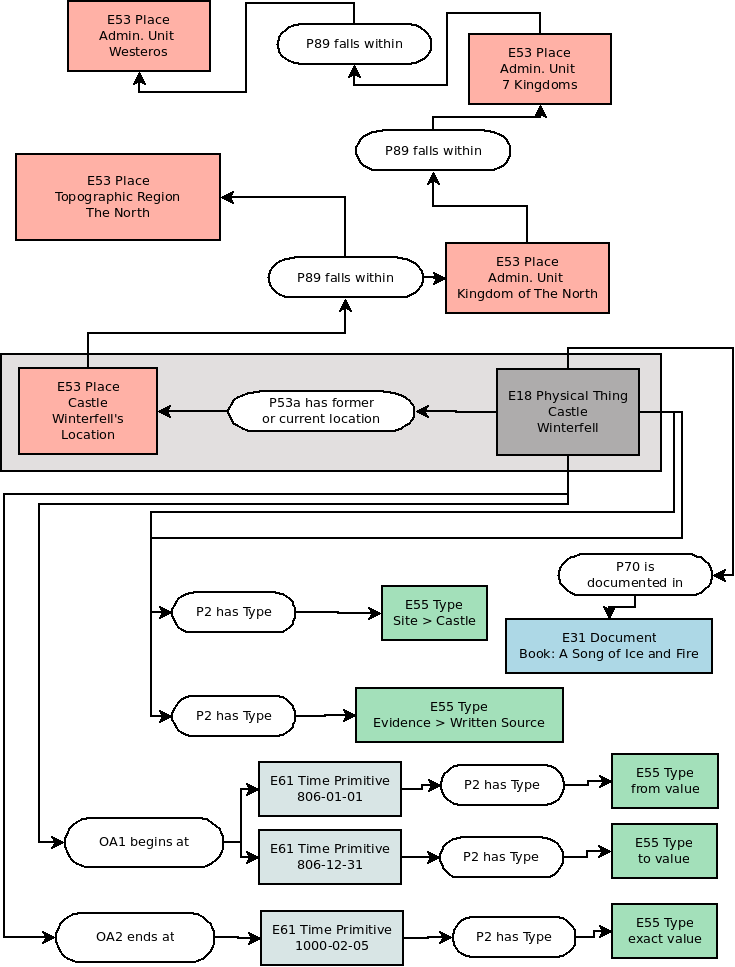

In the following section some mapping examples are discussed. Please be aware that they may differ from the current development version of OpenAtlas.

In this section various data mappings, as used in OpenAtlas, are presented. They use classes and properties from the CIDOC CRM (http://www.cidoc-crm.org/) to map the information. If custom properties or shortcuts are used they are named "OA" with a certain number. They are described in detail in the Custom Properties and Shortcuts section. Within OpenAtlas it is also possible to specify various additional attributes a property can have, e.g. the timespan in which this property links two entities or the role an actor has within a certain group.

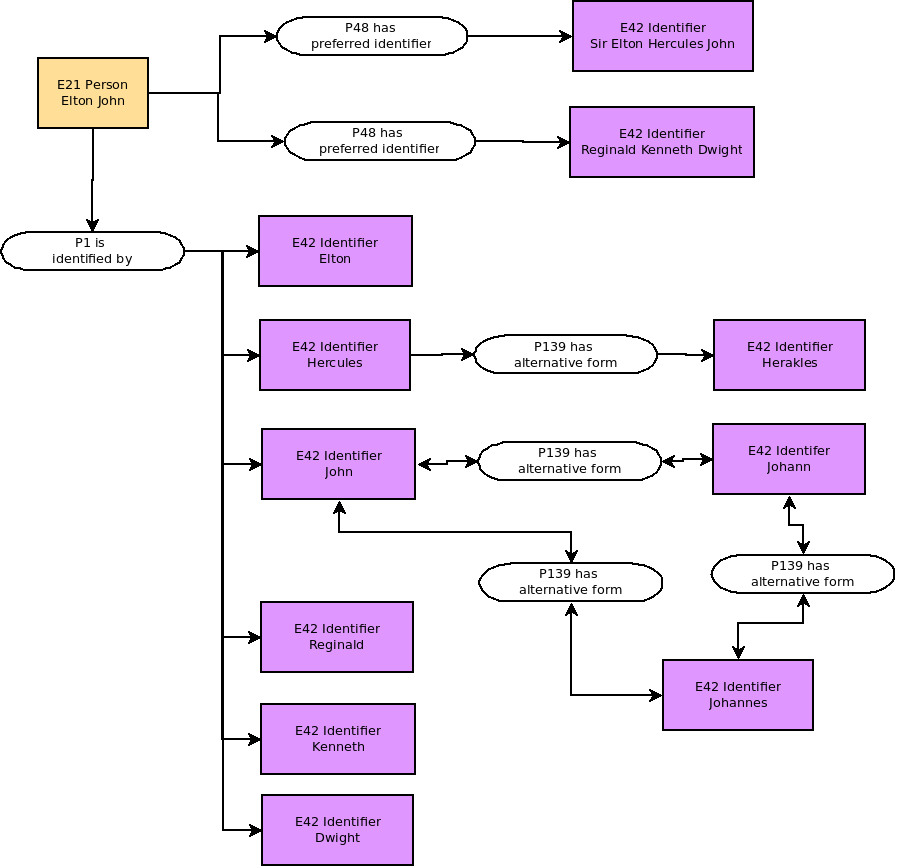

Every entity recorded in OpenAtlas can have a main name or primary identifier that is stored via P1 ("is identified by" or sub-property) from the entity to E41 ("Appellation" or sub-class). This name or identifier can consist of one or more words and can contain, e.g. in case of an actor, a first name, second name and surname as well as additional information like titles: "Sir Elton Hercules John".

If an entity has alternative names like stage names or pseudonyms, they are also documented using a P1 (is identified by) linked to E41 (Appellation): "Reginald Kenneth Dwight" (the birth name of Elton John). Another example would be "Charlemagne", "Carolus Magnus" and "Karl der Große", which are recorded with the described properties.

Alternative forms of names are mapped with P139: Two E42 identifier entities linked via P139 (has alternative form).

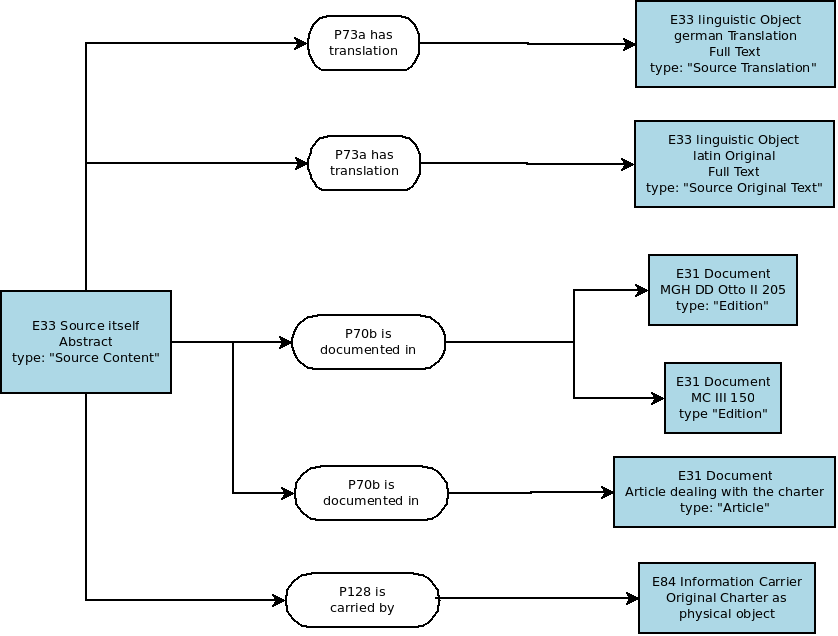

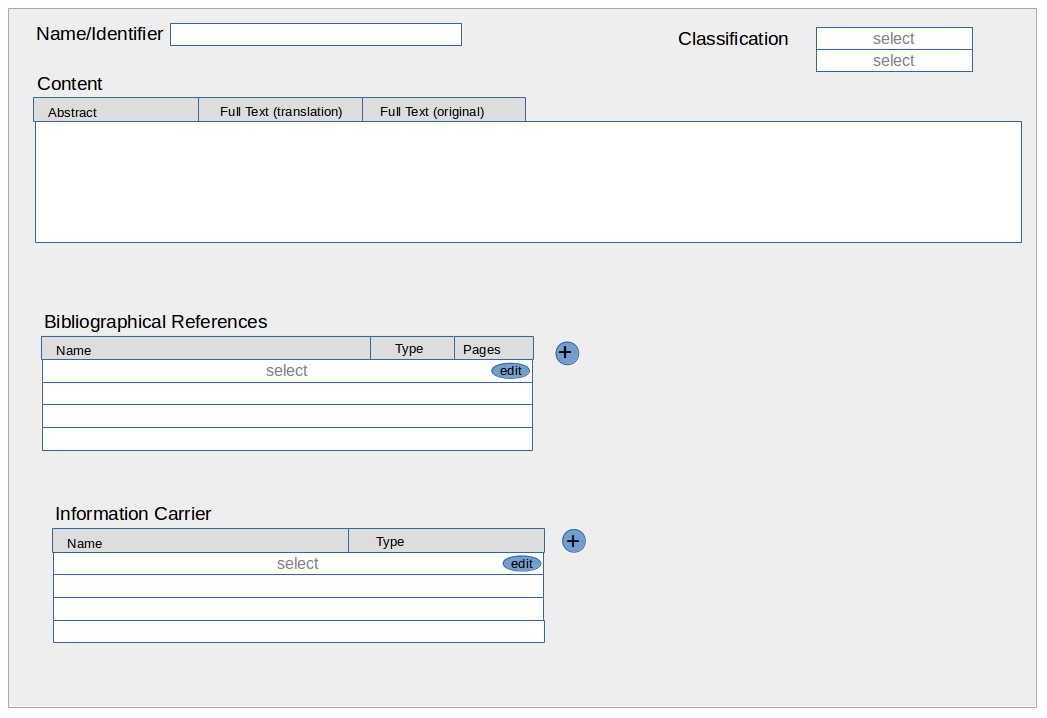

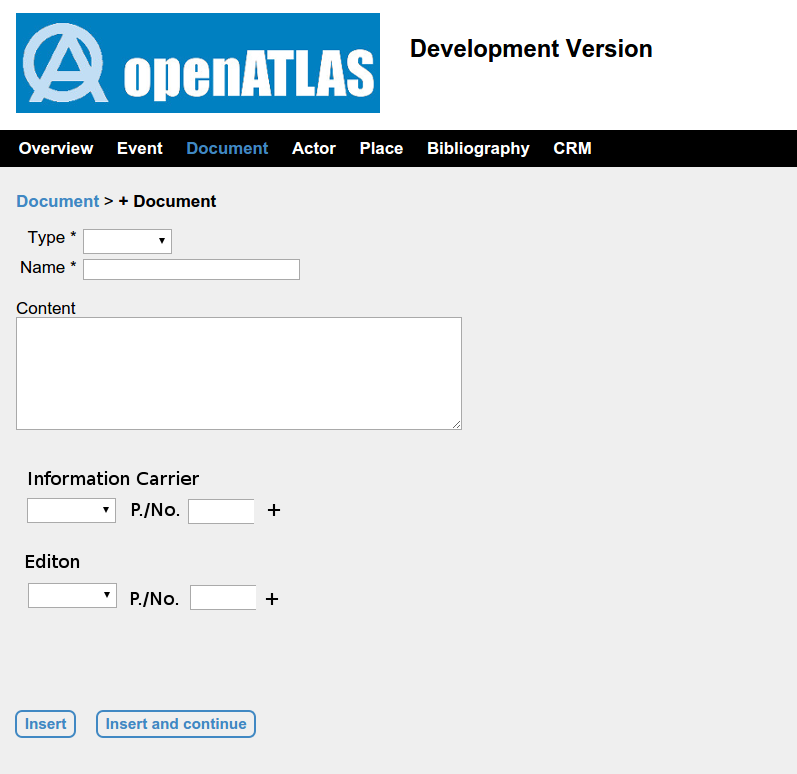

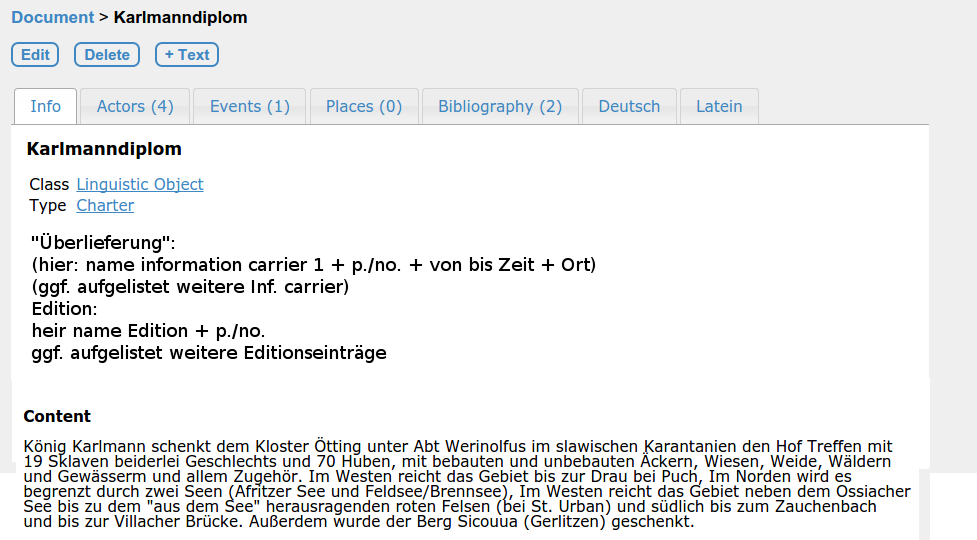

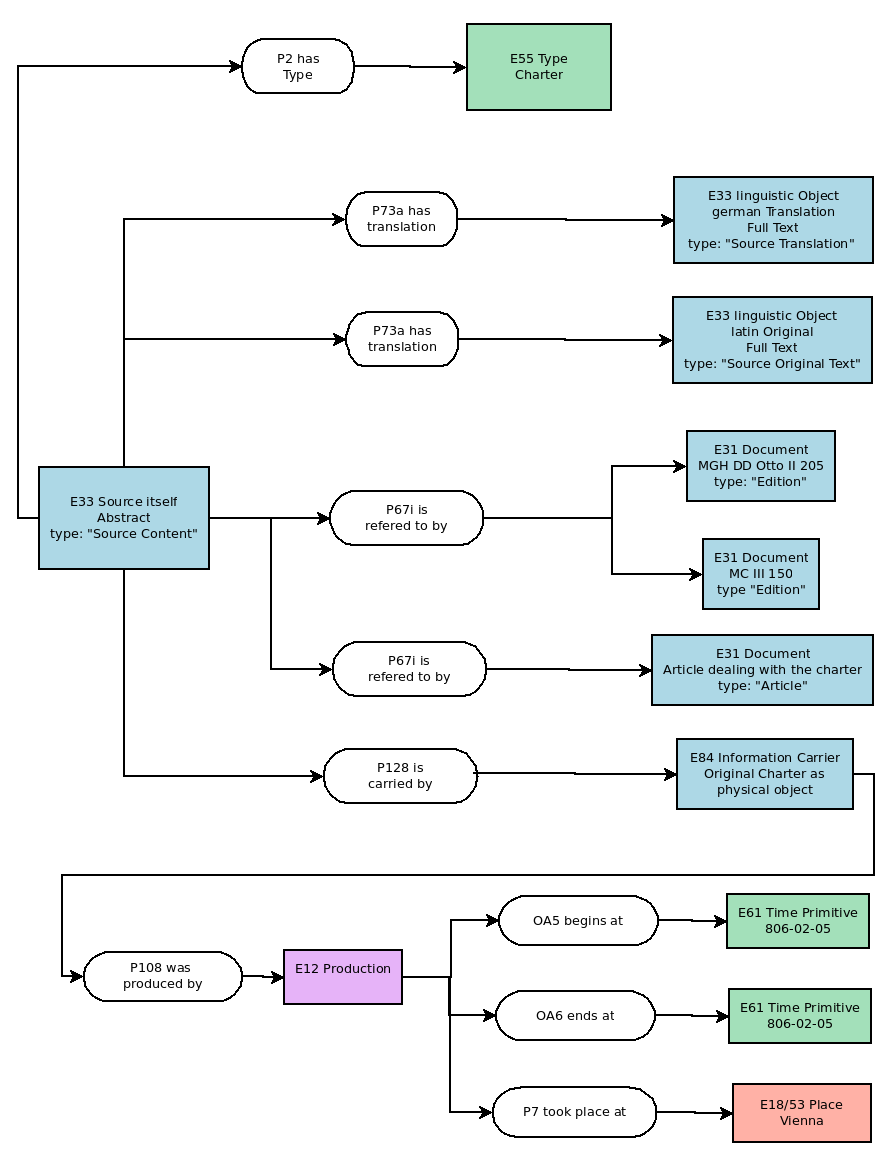

Written sources, such as medieval charters, are mapped in OpenAtlas as a combination of several entities. The core is the content of the source, which is defined as E33 (linguistic object).

This core usually contains a summary or the whole text of the source in a language understandable for the current users of the database. It can be linked to translations or to the text in the original language (e.g. Latin), which is also stored as E33 (linguistic object).

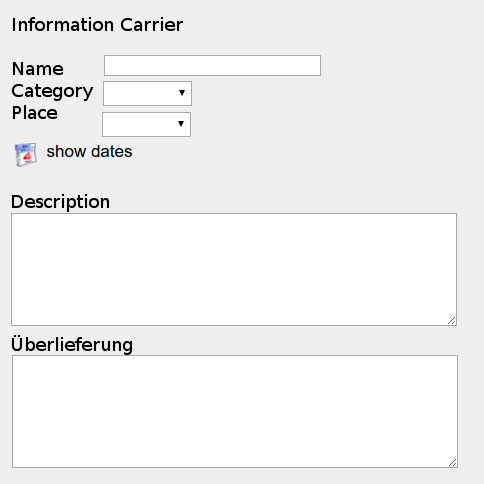

The core can also be linked to a physical object (E84 Information Carrier) like the original charter (e.g. a parchment manuscript) that carries the information described in the core.

The core content can also be documented in other documents (E31), for example various editions of charters or secondary sources.

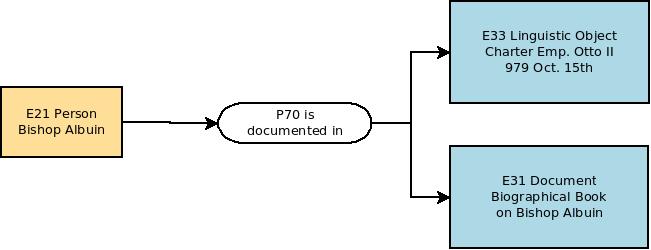

The core of the source, i.e. the source's content, provides information on various other things such as the events, persons and physical things mentioned in the text. These relations are recorded with a P70b (is documented in) link from the mentioned entity to the source.

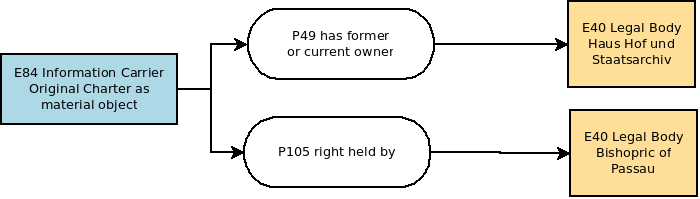

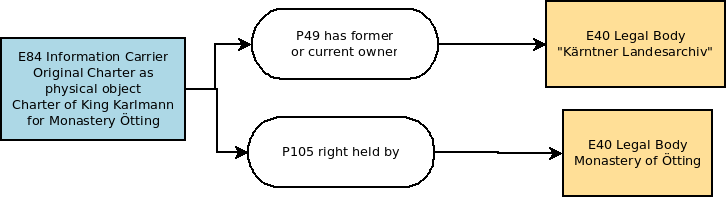

Information on the environment in which the source originally was generated or on the current or former owner is connected to the physical object/information carrier. This includes for example the legal body or the actor for whom a charter originally was signed or the archive where it is or was stored. Also time and place of creation, as well as the creator and the context of the creation can be stored.

The actor for whom the document was originally set up is recorded by a link P105 (right held by) from the information carrier (E84) to the actor.

The current or former owner of the document, e.g. the archive where a charter is currently kept, is recorded by a link P46 (has former or current owner) from the information carrier (E84) to the actor.

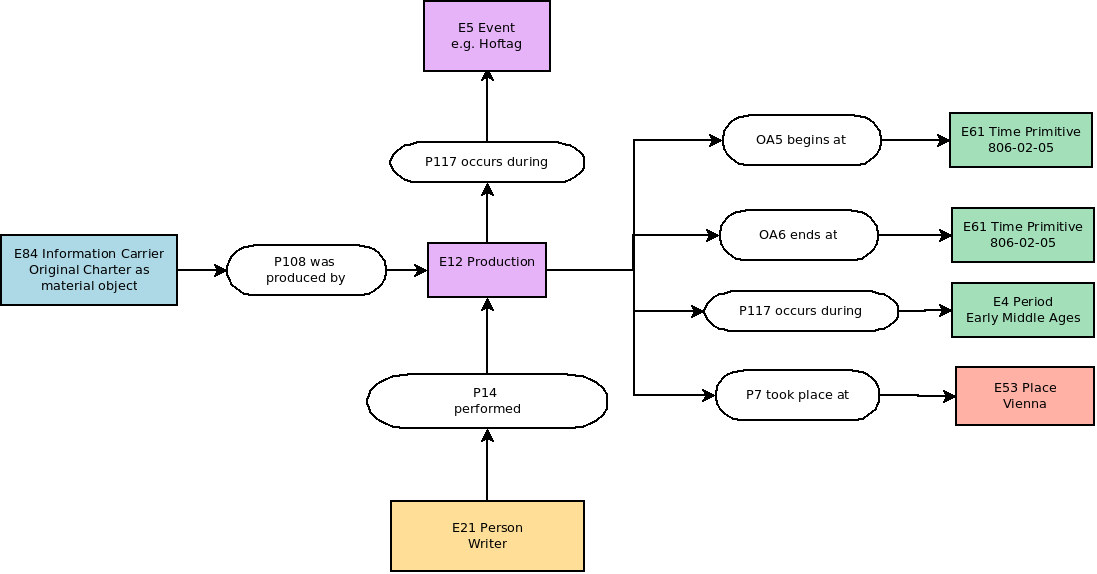

The production of the charter is recorded by an E12 (Production) event that is linked to the charter (E84 information carrier) with a P108 (was produced by) link.

This E12 Production can be linked to places (P7 took place at) and to a certain point in time (OA5 and OA6 begins/ends at).

It can also be linked to other temporal entities, like a superior event during which the charter was produced (e.g. a Hoftag/Assembly) or to a certain chronological period/timespan via a P117 (occurs during) link.

The creator/writer (E39 Actor) of the charter is linked to the production event via a P14 (performed) property.

OpenAtlas deals with single persons (E21), groups (E74) such as families, and legal bodies (E40) such as the Holy Roman Empire.

They can be linked to other entities like actors, events, documents, physical things, places, date and time etc.

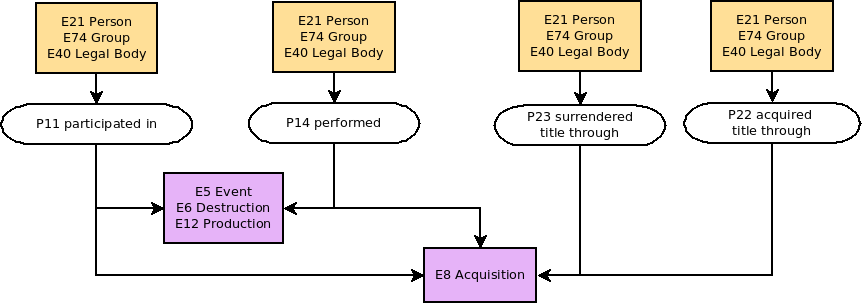

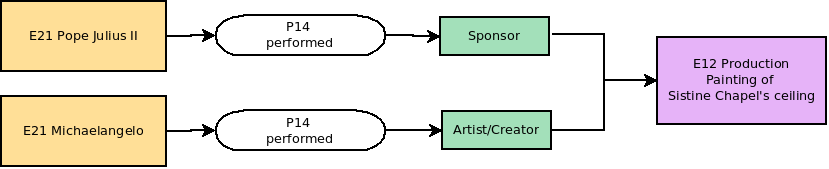

Actors can participate in events either actively or passively. The first case is mapped with P14 performed, the latter with P11 participated in. For changes of property (i.e. if the event is an E8 Acquisition), P23 surrendered title through (for the giver) and P22 acquired title through (for the receiver) are used.

The actor's role can also be documented by linking the property with an E55 type entity, for example to map one actor's role as sponsor and another one's role as artist/creator during the creation of a physical man-made thing.
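The choice of property described above can be summarised in a few lines of Python. This is a sketch for illustration only; the function and its arguments are hypothetical and do not mirror the OpenAtlas code base.

```python
from typing import Optional


def participation_property(
        active: bool,
        acquisition_role: Optional[str] = None) -> str:
    """Return the CIDOC CRM property code for an actor's involvement.

    acquisition_role is only relevant for E8 Acquisition events and is
    either 'giver', 'receiver' or None (an assumption of this sketch).
    """
    if acquisition_role == 'giver':
        return 'P23'  # surrendered title through
    if acquisition_role == 'receiver':
        return 'P22'  # acquired title through
    # P14 performed for active, P11 participated in for passive involvement
    return 'P14' if active else 'P11'


print(participation_property(active=True))
print(participation_property(active=False, acquisition_role='giver'))
```

The role itself (e.g. sponsor or creator) would be attached to the resulting link as an E55 type and is not covered by this sketch.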

Actors (like any other entities) can be linked to E73 Information Objects such as an E31 Document (e.g. an article on a historical person) or an E33 linguistic object (e.g. the text of a medieval charter in which a person is mentioned).

Actors can be part of or have a certain role within an E74 group or an E40 legal body. The specification of this "membership" is recorded with a link to an E55 type.

Actors can have direct relationships to other actors. Such relations are mapped with OA7 has relationship to. The type of relationship is specified with a link to an E55 type.

Such relationships can be the same in both directions (E.g. Person A is friend of Person B and at the same time Person B is friend of Person A) or have an opposite meaning in the opposite direction (E.g. Person A is the father of person B while Person B is the son of Person A).

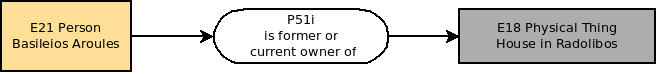

Actors can have direct relationships to physical things. In most cases this concerns property, meaning that, for example, a person is the owner of a thing such as a manor. Such relations are mapped with P51i is former or current owner of.

Actors can have various direct relations to places. In addition, they may participate in an event that takes place at a certain location (see: Actors and Events).

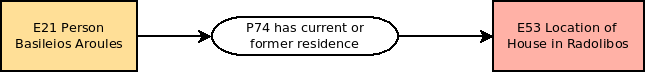

Actors can have a certain preferred place like a residence, headquarters etc. E.g. Salzburg (E53 place) is the headquarters of the Salzburg Bishopric (E40 legal body). Such relations are mapped with P74 has current or former residence.

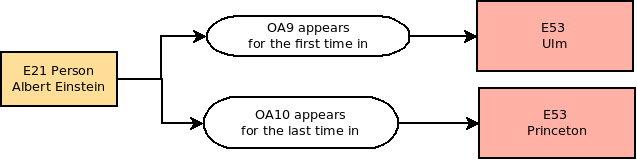

Actors can have a certain place in which they are born (or appear for the first time) as well as a place of death (or a place where they appear for the last time). Such relations are mapped with OA8 appears for the first time in and OA9 appears for the last time in.

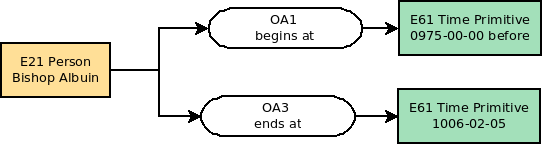

Actors can have a date at which they were born (or appear for the first time) or die (or appear for the last time). Such relations are mapped with OA1 begins chronologically and OA2 ends chronologically (to mark the timespan or date in/on which they appear for the first or last time) or OA3 born_chronologically and OA4 dies_chronologically (to mark a date on which they were born or died, if known). They link an actor with a time primitive like a timestamp.

If this date is not known exactly, two time primitives can be recorded to mark the time span in which the birth or first appearance, or the death or last appearance, took place. The first timestamp is connected (P2 has type) with a type (E55) "from value", the second with a type "to value" (both are subtypes of "Numeric Value Types"). If one exact date is known, it gets the type "exact value".

These shortcuts are used in OpenAtlas to link various entities for certain purposes:

OA1 is used to link the beginning of a persistent item's (E77) life span (or time of usage) with a certain date in time.

E77 Persistent Item linked with an E61 Time Primitive:

E77 (Persistent Item) - P92i (was brought into existence by) - E63 (Beginning of Existence) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Holy Lance (E22)] was brought into existence by [forging of Holy Lance (E12)] has time span [Moment/Duration of Forging of Holy Lance (E52)] ongoing throughout [0770-12-24 (E61)]

OA2 is used to link the end of a persistent item's (E77) life span (or time of usage) with a certain date in time.

E77 Persistent Item linked with an E61 Time Primitive:

E77 (Persistent Item) - P93i (was taken out of existence by) - E64 (End of Existence) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [The one ring (E22)] was destroyed by [Destruction of the one ring (E12)] has time span [Moment of throwing it down the lava (E52)] ongoing throughout [3019-03-25 (E61)]

OA3 is used to link the birth of a person with a certain date in time.

E21 Person's Birth linked with an E61 Time Primitive:

E21 (Person) - P98i (was born) by - E67 (Birth) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Stefan (E21)] was born by [birth of Stefan (E12)] has time span [Moment/Duration of Stefan's birth (E52)] ongoing throughout [1981-11-23 (E61)]

OA4 is used to link the death of a person with a certain date in time.

E21 Person's Death linked with an E61 Time Primitive:

E21 (Person) - P100i (died in) - E69 (Death) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Lady Diana (E21)] died in [death of Diana (E69)] has time span [Moment/Duration of Diana's death (E52)] ongoing throughout [1997-08-31 (E61)]

OA5 is used to link the beginning of a temporal entity (E2) with a certain date in time. It can also be used to determine the beginning of a property's duration.

E2 Temporal Entity linked with an E61 Time Primitive:

E2 (Temporal Entity) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Thirty Years' War (E7)] has time span [Moment/Duration of Beginning of Thirty Years' War (E52)] ongoing throughout [1618-05-23 (E61)]

OA6 is used to link the end of a temporal entity (E2) with a certain date in time. It can also be used to determine the end of a property's duration.

E2 Temporal Entity linked with an E61 Time Primitive:

E2 (Temporal Entity) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Thirty Years' War (E7)] has time span [Moment/Duration of End of Thirty Years' War (E52)] ongoing throughout [1648-10-24 (E61)]

OA7 is used to link two Actors (E39) via a certain relationship.

E39 Actor linked with E39 Actor:

E39 (Actor) - P11i (participated in) - E5 (Event) - P11 (had participant) - E39 (Actor)

Example: [Stefan (E21)] participated in [Relationship from Stefan to Joachim (E5)] had participant [Joachim (E21)]

The connecting event is defined by an entity of class E55 (Type):

[Relationship from Stefan to Joachim (E5)] has type [Son to Father (E55)]

OA8 is used to link the beginning of a persistent item's (E77) life span (or time of usage) with a certain place, e.g. to document the birthplace of a person.

E77 Persistent Item linked with an E53 Place:

E77 (Persistent Item) - P92i (was brought into existence by) - E63 (Beginning of Existence) - P7 (took place at) - E53 (Place)

Example: [Albert Einstein (E21)] was brought into existence by [Birth of Albert Einstein (E12)] took place at [Ulm (E53)]

OA9 is used to link the end of a persistent item's (E77) life span (or time of usage) with a certain place, e.g. to document a person's place of death.

E77 Persistent Item linked with an E53 Place:

E77 (Persistent Item) - P93i (was taken out of existence by) - E64 (End of Existence) - P7 (took place at) - E53 (Place)

Example: [Albert Einstein (E21)] was taken out of existence by [Death of Albert Einstein (E12)] took place at [Princeton (E53)]
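To illustrate how a shortcut stands for a longer CIDOC CRM path, the following Python sketch expands OA7, OA8 and OA9 into subject, property, object triples. The paths are taken from the descriptions above; the data structure and the function name are hypothetical and only meant as an illustration.

```python
# Shortcut -> alternating list of classes and properties (from the text above)
OA_PATHS = {
    'OA7': ['E39', 'P11i', 'E5', 'P11', 'E39'],
    'OA8': ['E77', 'P92i', 'E63', 'P7', 'E53'],
    'OA9': ['E77', 'P93i', 'E64', 'P7', 'E53']}


def expand_shortcut(code: str, nodes: list) -> list:
    """Expand a shortcut into (subject, property, object) triples.

    nodes are the names of the entities along the path, including the
    intermediate event that the shortcut hides.
    """
    properties = OA_PATHS[code][1::2]  # every second item is a property
    if len(nodes) != len(properties) + 1:
        raise ValueError(f'{code} needs {len(properties) + 1} nodes')
    return [
        (nodes[i], property_, nodes[i + 1])
        for i, property_ in enumerate(properties)]


for triple in expand_shortcut(
        'OA8',
        ['Albert Einstein', 'Birth of Albert Einstein', 'Ulm']):
    print(triple)
```

The intermediate event (here the birth) has to be named explicitly in this sketch; in the shortcut notation it is implied.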

E77 (Persistent Item) - P8 (witnessed) - E5 (its own Creation or Modification) - P117 (occurs during) - E4 (period)

Example: [Church of Notre Dame (E18)] belongs stylistically to [Gothic Period (E4)]

E77 (Persistent Item) - P93i (was taken out of existence by) - E64 (end of Existence) - P4 (has time span) - E52 (Time Span)

Example: [The one ring (E22)] was destroyed by [Destruction of the one ring (E12)] has time span [Late Third Age (E52)]

E77 (Persistent Item) - P92i (was brought into existence by) - E63 (Beginning of Existence) - P4 (has time span) - E52 (Time Span)

Example: [Holy Lance (E22)] was brought into existence by [forging of Holy Lance (E12)] has time span [Carolingian Period (E52)]

In our model it is possible to link from E1 with P2 to E59

This draft is about best practices for development that are not already covered by the use of PEP 8, Pylint and Mypy.

Code review (Wikipedia) is an essential tool when developing quality software. We welcome interested persons and provide some general information about it here.

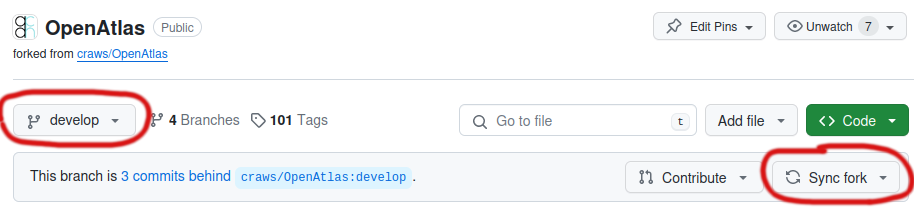

The code is available at GitHub and you'll find links to demo versions and manual, used technologies and much more on the OpenAtlas website.

Information about development is available here, in our Redmine Wiki.

We recommend using your favorite editor to analyze the code and install OpenAtlas locally to e.g. run tests.

A good read about working on code together is The Ten Commandments of Egoless Programming, as originally established in Jerry Weinberg's book.

These documents were written in 2015 and may be quite outdated. They are kept for historical reasons.

Mappings - How OpenAtlas maps its information within the CIDOC CRM (Examples)

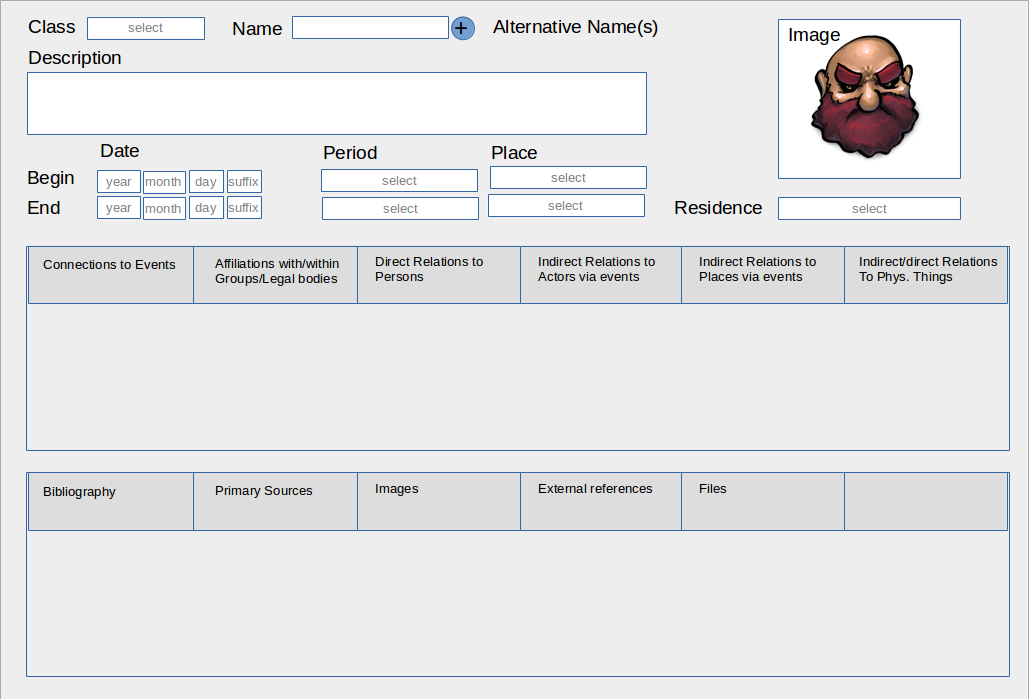

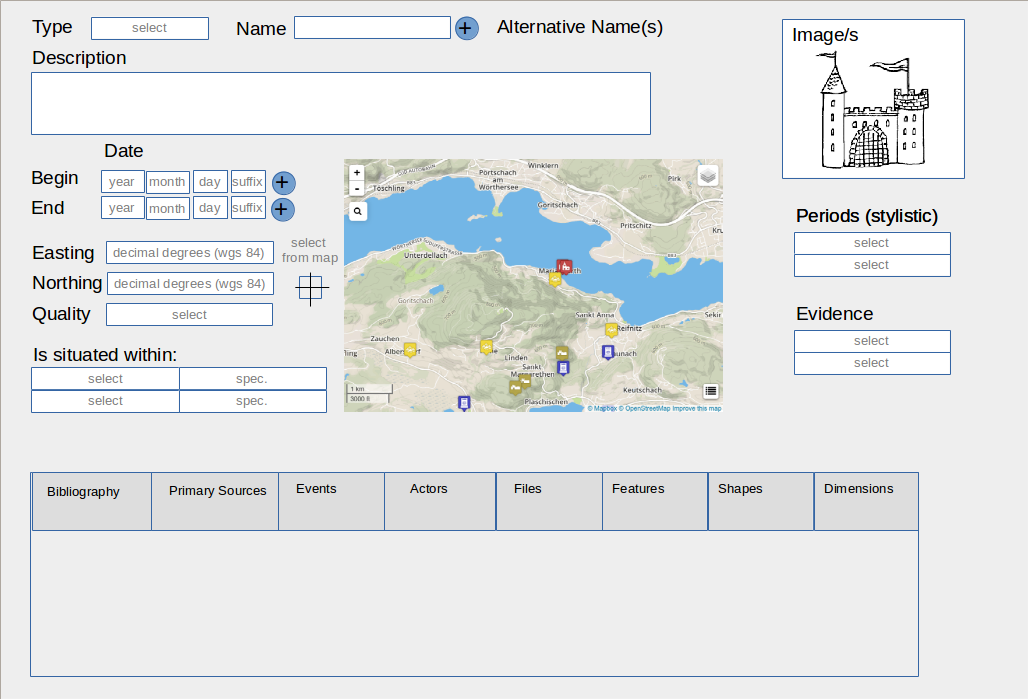

Graphical User Interface - Concept, Fields, Forms and Content of the GUI

Localisation - Spatial position of physical things

Except where otherwise noted, content on this site is licensed under the Creative Commons Attribution-ShareAlike 4.0 International License

CIDOC - http://www.cidoc-crm.org

CIDOC Eigenschaften - http://cidoc-crm.gnm.de/wiki/Eigenschaften

DPP Map Viewer (experimental): http://dev.geo.univie.ac.at/projects/dpp/#activetypes=&center=41.226183305514596%2C22.851562500000004&selection=&selectioncategory=&time=300%2C1500&zoom=8

SELECT move.id, from_link.range_id, to_link.range_id FROM model.entity move

LEFT JOIN model.link from_link ON move.id = from_link.domain_id AND from_link.property_code = 'P27'

LEFT JOIN model.link to_link ON move.id = to_link.domain_id AND to_link.property_code = 'P26'

WHERE move.openatlas_class_name = 'move' AND from_link.range_id IS NULL AND to_link.range_id IS NOT NULL

ORDER BY move.id;

UPDATE model.entity SET class_code = 'E9', system_class = 'move' WHERE id IN ( SELECT id FROM model.entity WHERE id in (7511, 8215, 8420, 8422, 8770) AND class_code = 'E7');

UPDATE model.entity SET class_code = 'E9', system_class = 'move' WHERE id IN ( SELECT id FROM model.entity WHERE id in (1124, 1418, 950, 1409) AND class_code = 'E7');

Transform all events with type letter exchange to move event:

UPDATE model.entity SET class_code = 'E9' WHERE id IN (

SELECT e.id FROM model.entity e

JOIN model.link l ON e.id = l.domain_id AND l.range_id = 639 AND e.class_code = 'E7');

Update all move locations to start locations:

UPDATE model.link SET property_code = 'P27' WHERE id IN (

SELECT l.id FROM model.link l

JOIN model.entity e ON l.domain_id = e.id AND l.property_code = 'P7' AND e.class_code = 'E9');

Remove actors from move events and add them to source

BEGIN;

UPDATE model.entity SET class_code = 'E9' WHERE id IN (

SELECT e.id FROM model.entity e

JOIN model.link l ON e.id = l.domain_id AND l.range_id = 939 AND e.class_code = 'E7');

UPDATE model.link SET property_code = 'P27' WHERE id IN (

SELECT l.id FROM model.link l

JOIN model.entity e ON l.domain_id = e.id AND l.property_code = 'P7' AND e.class_code = 'E9');

INSERT INTO model.link (domain_id, range_id, property_code)

SELECT el.domain_id, l.range_id, 'P67' FROM model.link l

JOIN model.entity e ON l.domain_id = e.id AND l.type_id IN (862, 1091, 943, 1046, 1045)

JOIN model.link el ON e.id = el.range_id AND el.property_code = 'P67'

JOIN model.entity s ON el.domain_id = s.id AND s.class_code = 'E33';

DELETE FROM model.link WHERE id in (

SELECT l.id FROM model.link l

JOIN model.entity e ON l.domain_id = e.id AND l.type_id IN (862, 1091, 943, 1046, 1045)

JOIN model.link el ON e.id = el.range_id AND el.property_code = 'P67'

JOIN model.entity s ON el.domain_id = s.id AND s.class_code = 'E33'

);

COMMIT;

Remove description from move events and add it to source description.

BEGIN;

UPDATE model.entity s SET description = description || E'\r\n----\r\n' || (

SELECT e.description

FROM model.entity e

JOIN model.link l ON e.id = l.range_id AND l.property_code = 'P67' and e.class_code = 'E9' AND l.domain_id = s.id AND e.id NOT IN (1672, 1617, 1612, 1673, 1674, 1433, 1421, 1663, 1619, 1435, 1444, 1443, 1596, 1603, 826, 1496, 1493))

WHERE id IN (

SELECT l.domain_id

FROM model.entity e

JOIN model.link l ON e.id = l.range_id AND l.property_code = 'P67' and e.class_code = 'E9' AND e.id NOT IN (1672, 1617, 1612, 1673, 1674, 1433, 1421, 1663, 1619, 1435, 1444, 1443, 1596, 1603, 826, 1496, 1493));

UPDATE model.entity SET description = ''

WHERE id IN (

SELECT l.range_id

FROM model.entity e

JOIN model.link l ON e.id = l.range_id AND l.property_code = 'P67' and e.class_code = 'E9' AND e.id NOT IN (1672, 1617, 1612, 1673, 1674, 1433, 1421, 1663, 1619, 1435, 1444, 1443, 1596, 1603, 826, 1496, 1493));

COMMIT;

Join references

UPDATE model.link SET domain_id = 3204

WHERE property_code = 'P67' AND domain_id IN

(3216, 3218, 3222, 3230, 3234, 3238, 3285, 3287, 3705, 3713, 3715, 3719, 3745, 3815, 3845, 3849, 3853, 4272, 4276, 4281, 4286, 4293, 4297, 4301, 4367, 4369, 4374, 4494, 4731, 4735, 4742, 4926, 5130, 5132, 5137, 5141, 5148, 5149, 5154, 5158, 5232, 5236, 5240, 5245, 5264, 5269, 5273, 5277, 5281, 5286, 5290, 5294, 5295, 5299, 5303, 5307, 5311, 5315, 5319);

DELETE FROM model.entity WHERE id IN

(3216, 3218, 3222, 3230, 3234, 3238, 3285, 3287, 3705, 3713, 3715, 3719, 3745, 3815, 3845, 3849, 3853, 4272, 4276, 4281, 4286, 4293, 4297, 4301, 4367, 4369, 4374, 4494, 4731, 4735, 4742, 4926, 5130, 5132, 5137, 5141, 5148, 5149, 5154, 5158, 5232, 5236, 5240, 5245, 5264, 5269, 5273, 5277, 5281, 5286, 5290, 5294, 5295, 5299, 5303, 5307, 5311, 5315, 5319);

To allow Cross-Origin Resource Sharing (CORS), the OpenAtlas API uses flask-cors. Every path under /api/ is covered by the CORS configuration, but access is currently allowed from everywhere ('*').

cors = CORS(app, resources={r"/api/*": {"origins": app.config['CORS_ALLOWANCE']}})

Origins point to a global variable, which can be changed in the instance/production.py. Default value:

CORS_ALLOWANCE = '*'

The value can be a case-sensitive string for a single point of origin, a regular expression, a list or an asterisk (*) as wildcard.

Examples:

CORS_ALLOWANCE = 'https://thanados.net/'

CORS_ALLOWANCE = ['https://thanados.net/', 'https://openatlas.eu/']

CORS_ALLOWANCE = r'^((https?:\/\/)?.*?([\w\d-]*\.[\w\d]+))($|\/.*$)'
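How the different kinds of values behave can be pictured with the following simplified Python sketch. It is not the flask-cors implementation: flask-cors decides on its own whether a string is treated as a regular expression, whereas this sketch assumes that regular expressions are passed as compiled patterns. The function name origin_allowed is hypothetical.

```python
import re


def origin_allowed(origin: str, allowance) -> bool:
    """Simplified check of an origin against a CORS_ALLOWANCE-like value."""
    if isinstance(allowance, (list, tuple)):
        # A list allows the origin if any of its items allows it
        return any(origin_allowed(origin, item) for item in allowance)
    if isinstance(allowance, re.Pattern):
        return allowance.match(origin) is not None
    # Plain strings: wildcard or exact, case-sensitive comparison
    return allowance == '*' or allowance == origin


print(origin_allowed('https://thanados.net/', '*'))
print(origin_allowed('https://Thanados.net/', 'https://thanados.net/'))
```

The second call shows the case sensitivity mentioned above: the origin differs only in capitalisation and is therefore rejected.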

https://flask-cors.readthedocs.io/

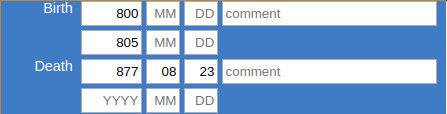

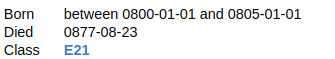

The OpenAtlas data model can store chronological information for certain entities and properties.

In general it can document a timespan for the beginning and a timespan for the end of those. The smallest timespan unit possible is one day. There are no restrictions regarding the length of these time spans.

E.g. if you do not know the exact date of an actor's birth, but only that she/he was born at the earliest in 800 AD and at the latest before 805 AD, this timespan would range from Jan. the 1st, 800 to Jan. the 1st, 805. Given that the person died on the 23rd of Aug. 877, you would only enter that day as an end date. In the user interface this case would look like:

The system automatically creates the correct timespan given by the bounding dates provided by the user:

This way you can store chronological information as precise as possible or as fuzzy as necessary. Also it is possible to add a comment on the date e.g. "circa".
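The example of a birth between 800 and 805 and a death on 877-08-23 can be sketched in Python as follows. The field names follow the database fields begin_from, begin_to, begin_comment, end_from, end_to and end_comment; the class itself and the rule that an exact date only fills the "from" field are assumptions of this sketch, not the OpenAtlas implementation.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional, Tuple


@dataclass
class Dates:
    """Hypothetical container for the chronological fields of an entity."""
    begin_from: Optional[date] = None
    begin_to: Optional[date] = None
    begin_comment: Optional[str] = None
    end_from: Optional[date] = None
    end_to: Optional[date] = None
    end_comment: Optional[str] = None

    def begin_span(self) -> Tuple[Optional[date], Optional[date]]:
        # Assumption: an exact date is stored in begin_from only
        return self.begin_from, self.begin_to or self.begin_from

    def end_span(self) -> Tuple[Optional[date], Optional[date]]:
        # Assumption: an exact date is stored in end_from only
        return self.end_from, self.end_to or self.end_from


person = Dates(
    begin_from=date(800, 1, 1),
    begin_to=date(805, 1, 1),
    begin_comment='circa',
    end_from=date(877, 8, 23))
print(person.begin_span())
print(person.end_span())
```

For the exact date of death both ends of the span are the same day, while the birth keeps its fuzzy range of five years.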

The same principle works for all of the following entities and properties:

Entities

Physical Things

Places (E18)

Features (E18)

Stratigraphic Units (E18)

Finds (E22)

Actors

Persons (E21)

Groups (E74)

Legal Bodies (E40)

Events

Activity (E7)

Acquisition (E8)

Production (E12)

Destruction (E6)

Properties that link actors to Events

performed (P14)

participated in (P11)

acquired title through (P22)

surrendered title through (P23)

Properties that link actors to Actors

has relationship to (OA7)

is current or former member of (P107)

The data is stored in the table model.entity or, for properties, model.link in the fields begin_from, begin_to, begin_comment, end_from, end_to and end_comment, with the dates stored as timestamps.

Depending on the class of the entity, or on the domain and range classes of the link, these dates can be mapped as time primitive (E61) entities within the CIDOC CRM.

The respective paths are the following:

E77 (Persistent Item) - P92i (was brought into existence by) - E63 (Beginning of Existence) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Holy Lance (E22)] was brought into existence by [forging of Holy Lance (E12)] has time span [Moment/Duration of Forging of Holy Lance (E52)] ongoing throughout [0770-12-24 (E61)]

E77 Persistent Item end linked with an E61 Time Primitive:

E77 (Persistent Item) - P93i (was taken out of existence by) - E64 (End of Existence) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [The one ring (E22)] was destroyed by [Destruction of the one ring (E12)] has time span [Moment of throwing it down the lava (E52)] ongoing throughout [3019-03-25 (E61)]

E21 Person's Birth linked with an E61 Time Primitive:

E21 (Person) - P98i (was born) by - E67 (Birth) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Stefan (E21)] was born by [birth of Stefan (E12)] has time span [Moment/Duration of Stefan's birth (E52)] ongoing throughout [1981-11-23 (E61)]

E21 Person's Death linked with an E61 Time Primitive:

E21 (Person) - P100i (died in) - E69 (Death) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Lady Diana (E21)] died in [death of Diana (E69)] has time span [Moment/Duration of Diana's death (E52)] ongoing throughout [1997-08-31 (E61)]

E2 Temporal Entity (also property) begin linked with an E61 Time Primitive:

E2 (Temporal Entity) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Thirty Years' War (E7)] has time span [Moment/Duration of Beginning of Thirty Years' War (E52)] ongoing throughout [1618-05-23 (E61)]

E2 Temporal Entity (also property) end linked with an E61 Time Primitive:

E2 (Temporal Entity) - P4 (has time span) - E52 (Time Span) - P81 (ongoing throughout) - E61 (Time Primitive)

Example: [Thirty Years' War (E7)] has time span [Moment/Duration of End of Thirty Years' War (E52)] ongoing throughout [1648-10-24 (E61)]

These are advanced installation notes for a Debian server to deploy OpenAtlas.

We use these instructions for our own workflow. They are very specific and detailed (e.g. changing the prompt to color and showing git information), so feel free to use or adapt them as needed.

The OpenAtlas user interface is currently available in English and German. You might want to install the needed languages with this command:

dpkg-reconfigure locales

PermitRootLogin no

PasswordAuthentication no

# apt-get install unattended-upgrades apt-listchanges

Unattended-Upgrade::Mail "root";

rkhunter is a Unix-based tool that scans for rootkits, backdoors and possible local exploits. To install rkhunter and prevent false positives when deploying OpenAtlas, follow the instructions below.

Installation

apt install rkhunter

vim /etc/rkhunter.conf

vim /etc/rkhunter.conf.local

rkhunter -c --sk

rkhunter --propupd

vim /etc/default/rkhunter

apt install apache2 (needed for permissions and file structure)

groupadd web-admin

usermod -a -G web-admin alex

chgrp -R web-admin /var/www

chmod -R 775 /var/www

chmod g+s /var/www

vim ~/.profile

umask 002

mkdir /var/www/openatlas

mkdir /var/www/frontend

vim ~/.bashrc

function parse_git_dirty {

[[ $(git status 2> /dev/null | tail -n1) != "nothing to commit, working tree clean" ]] && echo "*"

}

function parse_git_branch {

git branch --no-color 2> /dev/null | sed -e '/^[^*]/d' -e "s/* \(.*\)/[\1$(parse_git_dirty)]/"

}

PS1='\[\e[1;34m\]\u@\h:\w\[\e[0;32m\]$(parse_git_branch)\[\e[1;34m\]\$ \[\e[m\]'

Change default editor to vim:

update-alternatives --config editor

Next follow the instructions how to install OpenAtlas: https://github.com/craws/OpenAtlas/blob/main/install.md

For git e.g. ACDH-CH:

$ git config --global http.proxy http://fifi.arz.oeaw.ac.at:8080

$ pip3 install --proxy=http://fifi.arz.oeaw.ac.at:8080 calmjs

$ npm config set proxy http://fifi.arz.oeaw.ac.at:8080

# a2dismod autoindex

# service apache2 restart

sudo apt install brotli

sudo a2enmod brotli

<IfModule mod_brotli.c>

AddOutputFilterByType BROTLI_COMPRESS text/html text/plain text/xml text/css text/javascript application/javascript application/json application/xml

BrotliCompressionQuality 4

</IfModule>

sudo service apache2 restart
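Whether Brotli is actually used after the restart can be checked by looking at the Content-Encoding response header. The sketch below is only an illustration: uses_brotli is a hypothetical helper name, and the header sample is made up so the check can be tried without a running server.

```shell
#!/bin/sh
# Hypothetical helper: reads HTTP response headers from stdin and succeeds
# if "br" is listed in the Content-Encoding header.
uses_brotli() {
    # strip carriage returns, then look for "br" as its own list entry
    tr -d '\r' |
        grep -iqE '^content-encoding:[[:space:]]*(.*,[[:space:]]*)?br[[:space:]]*(,|$)'
}

# Made-up header sample standing in for a real response
if printf 'HTTP/1.1 200 OK\r\nContent-Type: text/html\r\nContent-Encoding: br\r\n' | uses_brotli
then
    echo "Brotli active"
else
    echo "not compressed with Brotli"
fi

# Against a real site (replace the placeholder domain):
#   curl -s -o /dev/null -D - -H 'Accept-Encoding: br' https://your-domain.example/ | uses_brotli
```

The Accept-Encoding request header matters here, because the server only compresses with Brotli when the client announces support for it.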

(For sites on ACDH-CH servers ignore this, the certificate has to be managed by the proxy server.)

# apt install certbot python3-certbot-apache

# certbot --apache

# certbot

After configuration of certbot, uncomment the line with WSGIDaemonProcess in /etc/apache2/sites-available/XXX.conf before creating certificates for OpenAtlas instances.

System mails (e.g. from cron jobs) are sent with msmtp.

# apt install msmtp msmtp-mta ca-certificates

# vim /etc/msmtprc

# vim /etc/aliases

# msmtp root (to test, write some lines to not get flagged as spam and then press CTRL + D)

SQL statements used for producing demo data

Todo: Adapt like demo-dev SQL

BEGIN;

-- Disable triggers, otherwise script takes forever and/or runs into errors

ALTER TABLE model.entity DISABLE TRIGGER on_delete_entity;

ALTER TABLE model.link_property DISABLE TRIGGER on_delete_link_property;

-- Delete data from other users than Sonja and Petra

DELETE FROM model.entity WHERE id IN (

SELECT entity_id FROM web.user_log

WHERE action = 'insert'

AND class_code IN ('E33', 'E6', 'E7', 'E8', 'E12', 'E21', 'E40', 'E74', 'E18', 'E31', 'E84')

AND user_id NOT IN (21, 16));

-- Delete unrelated user

DELETE FROM web.user WHERE username NOT IN ('Alex', 'Demolina', 'jpreiser', 'pheinicker', 'sduenneb');

-- Insert demo user

INSERT INTO web.user (username, real_name, email, active, group_id, password) VALUES (

'Demolina', 'Demolina', 'demolina@example.com', True, (SELECT id FROM web.group WHERE name = 'editor'),

'$2b$12$9T05T1IiCnlEiUdf5gSosuSYewK5Rf4T/PwuvbSXEooR95BG2kgvG');

-- Disable email, set sitename and other settings

UPDATE web.settings SET value = '' WHERE name = 'mail';

UPDATE web.settings SET value = '' WHERE name LIKE 'mail_%';

UPDATE web.settings SET value = 'openatlas@craws.net' WHERE name LIKE 'mail_recipients_feedback';

UPDATE web.settings SET value = '1' WHERE name = 'file_upload_max_size';

UPDATE web.settings SET value = 'Demo' WHERE name = 'site_name';

-- Update content

UPDATE web.i18n SET text = '<p>Demo site for <a href="http://openatlas.eu/">OpenAtlas</a> projects. <a href="/login">Login</a>.</p>

<p>The data will be reset daily around midnight. Demo data kindly provided by:</p>

<p><strong>Mapping Medieval Conflicts (MEDCON). A digital approach towards political dynamics in the pre-modern period</strong><br /><br />MEDCON was funded within the go!digital-programme of the Austrian Academy of Sciences (OEAW) from October 2014 to May 2017 and hosted at the Institute for Medieval Research of OEAW. The project headed by Johannes Preiser-Kapeller examined the explanatory power of concepts of social and spatial network analysis for phenomena of political conflict in medieval societies.<br /><br />The data presented in this demo version stems from two of MEDCON´s case studies, “Emperor Frederick III and the League of the Mailberger coalition in 1451/52” (executed by Kornelia Holzner-Tobisch and Petra Heinicker) and “Factions and alliances in the fight of Maximilian I for Burgundy” (Sonja Dünnebeil).<br /><br />For further information on the project see: <a href="http://oeaw.academia.edu/MappingMedievalConflict" target="_blank" rel="noopener noreferrer">Mapping Medieval Conflict</a> or contact Johannes.Preiser-Kapeller@oeaw.ac.at.</p>

<p><strong>OpenAtlas</strong></p>' WHERE name = 'intro' AND language = 'en';

UPDATE web.i18n SET text = '<p style="text-align: left;">Demo Seite für <a href="http://openatlas.eu/">OpenAtlas</a> Projekte. Zum <a href="/login">Login</a>.</p>

<p>Die Daten werden täglich gegen Mitternacht zurückgesetzt. Demo Daten freundlicherweise zur Verfügung gestellt von:</p>

<p><strong>Mapping Medieval Conflicts (MEDCON). A digital approach towards political dynamics in the pre-modern period</strong><br /><br />MEDCON wurde durch das go!digital-Programm der Österreichischen Akademie der Wissenschaften (ÖAW) finanziert und vom Oktober 2014 bis zum Mai 2017 am Institut für Mittelalterforschung der ÖAW durchgeführt. Das Projekt untersuchte unter der Leitung von Johannes Preiser-Kapeller die Erklärungskraft von Konzepten der sozialen und geographischen Netzwerkanalyse für Phänomene des politischen Konflikts in mittelalterlichen Gesellschaften.<br /><br />Die Daten, die in dieser Demo-Version präsentiert werden, stammen aus zwei Fallstudien von MEDCON, „Kaiser Friedrich III. und die Liga der Mailberger Koalition, 1451/52“ (durchgeführt durch Kornelia Holzner-Tobisch und Petra Heinicker) und „Fraktionen und Allianzen im Kampf von Maximilian I. um Burgund“ (Sonja Dünnebeil).<br /><br />Weitere Informationen zum Projekt finden Sie hier: <a href="http://oeaw.academia.edu/MappingMedievalConflict" target="_blank" rel="noopener noreferrer">Mapping Medieval Conflict</a> (bzw. Kontakt: Johannes.Preiser-Kapeller@oeaw.ac.at).</p>

<p><strong>OpenAtlas</strong></p>' WHERE name = 'intro' AND language = 'de';

UPDATE web.i18n SET text = 'Webmaster: alexander.watzinger@craws.net' WHERE name = 'contact' AND language = 'en';

UPDATE web.i18n SET text = 'Webmaster: alexander.watzinger@craws.net' WHERE name = 'contact' AND language = 'de';

UPDATE web.i18n SET text = '' WHERE name = 'legal_notice' AND language = 'en';

UPDATE web.i18n SET text = '' WHERE name = 'legal_notice' AND language = 'de';

-- Delete orphans manually because triggers are disabled

DELETE FROM model.entity WHERE id IN (

SELECT e.id FROM model.entity e

LEFT JOIN model.link l1 on e.id = l1.domain_id

LEFT JOIN model.link l2 on e.id = l2.range_id

LEFT JOIN model.link_property lp2 on e.id = lp2.range_id

WHERE

l1.domain_id IS NULL

AND l2.range_id IS NULL

AND lp2.range_id IS NULL

AND e.class_code IN ('E61', 'E41', 'E53', 'E82'));

-- Delete orphaned translations

DELETE FROM model.entity WHERE system_type = 'source translation' AND id NOT IN (SELECT range_id FROM model.link WHERE property_code = 'P73');

-- Re-enable triggers

ALTER TABLE model.entity ENABLE TRIGGER on_delete_entity;

ALTER TABLE model.link_property ENABLE TRIGGER on_delete_link_property;

COMMIT;

After executing, test for orphaned locations and delete them with the SQL statements above.
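One possible way to test for remaining orphans is to run the SELECT part of the orphan DELETE above as a count. The following is a sketch under assumptions: the database name openatlas, the variable orphan_query and the function count_orphans are hypothetical and not part of OpenAtlas, and psql must be able to connect as the current user.

```shell
#!/bin/sh
# Sketch: count entities that the orphan DELETE above would match.
# The query mirrors the sub-select of that DELETE statement.
orphan_query="
SELECT COUNT(*) FROM model.entity e
LEFT JOIN model.link l1 ON e.id = l1.domain_id
LEFT JOIN model.link l2 ON e.id = l2.range_id
LEFT JOIN model.link_property lp2 ON e.id = lp2.range_id
WHERE l1.domain_id IS NULL
  AND l2.range_id IS NULL
  AND lp2.range_id IS NULL
  AND e.class_code IN ('E61', 'E41', 'E53', 'E82');"

# Assumption: database name defaults to "openatlas", override with $1.
count_orphans() {
    # -A: unaligned output, -t: tuples only, so just the number is printed
    psql -At -d "${1:-openatlas}" -c "$orphan_query"
}

# Usage (not executed here): count_orphans            # default database
#                            count_orphans demo_dev   # hypothetical other database
```

If the count is not 0, running the orphan DELETE again removes the remaining entries; locations are the entities with class code E53.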

-- SQL to filter demo data from DPP

BEGIN;

-- Disable triggers, otherwise script takes forever and/or runs into errors

ALTER TABLE model.entity DISABLE TRIGGER on_delete_entity;

-- Delete data from other case studies

DELETE FROM model.entity WHERE id NOT IN

(SELECT e.id FROM model.entity e

JOIN model.link l ON

e.id = l.domain_id

AND l.property_code = 'P2'

AND l.range_id IN (SELECT id FROM model.entity WHERE name IN ('Ethnonym of the Vlachs')))

AND class_code IN ('E33', 'E6', 'E7', 'E8', 'E12', 'E21', 'E74', 'E40', 'E31', 'E18', 'E84', 'E22')

AND (system_type IS NULL OR system_type NOT IN ('source translation'));

-- Delete orphans manually because triggers are disabled

DELETE FROM model.entity WHERE id IN (

SELECT e.id FROM model.entity e

LEFT JOIN model.link l1 on e.id = l1.domain_id

LEFT JOIN model.link l2 on e.id = l2.range_id

WHERE

l1.domain_id IS NULL

AND l2.range_id IS NULL

AND e.class_code IN ('E61', 'E41', 'E53', 'E82'));

-- Delete orphaned translations

DELETE FROM model.entity WHERE system_type = 'source translation' AND id NOT IN (SELECT range_id FROM model.link WHERE property_code = 'P73');

-- Delete unrelated user

DELETE FROM web.user WHERE username NOT IN ('Alex', 'dschmid', 'bkoschicek', 'mpopovic', 'jnikic');

-- Insert demo user

INSERT INTO web.user (username, real_name, email, active, group_id, password) VALUES (

'Demolina', 'Demolina', 'demolina@example.com', True, (SELECT id FROM web.group WHERE name = 'editor'),

'$2b$12$9T05T1IiCnlEiUdf5gSosuSYewK5Rf4T/PwuvbSXEooR95BG2kgvG');

-- Disable email, set sitename and other settings

UPDATE web.settings SET value = '' WHERE name = 'mail';

UPDATE web.settings SET value = '' WHERE name LIKE 'mail_%';

UPDATE web.settings SET value = 'openatlas@craws.net' WHERE name LIKE 'mail_recipients_feedback';

UPDATE web.settings SET value = '1' WHERE name = 'file_upload_max_size';

UPDATE web.settings SET value = 'Development Demo' WHERE name = 'site_name';

UPDATE web.settings SET value = 'Development Demo' WHERE name = 'site_header';

-- Update content

UPDATE web.i18n SET text = '<p>Development Demo site for <a href="http://openatlas.eu/">OpenAtlas</a> projects. <a href="/login">Login</a>.</p>

<p>The data will be reset daily around midnight. Demo data kindly provided by:</p>

<p><strong>The Ethnonym of the Vlachs in the Written Sources and the Toponymy in the Historical Region of Macedonia</strong> (11th-16th Cent.) <a href="http://dpp.oeaw.ac.at/index.php?seite=CaseStudies&submenu=skopje" target="_blank" rel="noopener noreferrer">More Information</a></p>

<p>The present demo version is the result of a scholarly project, which was submitted by the digital cluster project “Digitising Patterns of Power (<a href="http://dpp.oeaw.ac.at/" target="_blank" rel="noopener noreferrer">DPP</a>)” at the Institute for Medieval Research (Austrian Academy of Sciences, Vienna) and the Ss. Cyril and Methodius University of Skopje (Faculty of Philosophy, Institute for History). It focuses on the interplay between the resident population and the nomads (i.e. the Vlachs) in the historical region of Macedonia from the 11th to the 16th centuries.<br /><br />This region at the crossroads of Orthodoxy, Roman Catholicism and Islam and the question of the origin of the Vlachs, who identify themselves as a separate ethnic group until modern times, as well as the ethnonym "Vlachs" and its derivatives in the form of toponyms and personal names are at the core of the joint research. Hereby, historical and archaeological research is combined with Digital Humanities.<br /><br />The project, which was successfully submitted by the project coordinators Doz. Dr. Mihailo Popović and Prof. Dr. Toni Filiposki, is funded by the Centre for International Cooperation & Mobility (ICM) of the Austrian Agency for International Cooperation in Education and Research (OeAD-GmbH) for two years (2016-18) and forms an additional case study within DPP. <br /><br />Project teams:<br /><br />Toni Filiposki (project leader / Skopje), Boban Petrovski (Skopje), Nikola Minov (Skopje), Vladimir Kuhar (Skopje), Boban Gjorgjievski (Skopje)<br /><br />Mihailo Popović (project leader / Vienna), Jelena Nikić (Vienna), David Schmid (Vienna)</p>

<p><strong>OpenAtlas</strong></p>' WHERE name = 'intro' AND language = 'en';

UPDATE web.i18n SET text = '<p style="text-align: left;">Development Demo Seite für <a href="http://openatlas.eu/">OpenAtlas</a> Projekte. Zum <a href="/login">Login</a>.</p>

<p>Die Daten werden täglich gegen Mitternacht zurückgesetzt. Demo Daten freundlicherweise zur Verfügung gestellt von:</p>

<p><strong>The Ethnonym of the Vlachs in the Written Sources and the Toponymy in the Historical Region of Macedonia</strong> (11th-16th Cent.) <a href="http://dpp.oeaw.ac.at/index.php?seite=CaseStudies&submenu=skopje" target="_blank" rel="noopener noreferrer">More Information</a></p>

<p>The present demo version is the result of a scholarly project, which was submitted by the digital cluster project “Digitising Patterns of Power (<a href="http://dpp.oeaw.ac.at/" target="_blank" rel="noopener noreferrer">DPP</a>)” at the Institute for Medieval Research (Austrian Academy of Sciences, Vienna) and the Ss. Cyril and Methodius University of Skopje (Faculty of Philosophy, Institute for History). It focuses on the interplay between the resident population and the nomads (i.e. the Vlachs) in the historical region of Macedonia from the 11th to the 16th centuries.<br /><br />This region at the crossroads of Orthodoxy, Roman Catholicism and Islam and the question of the origin of the Vlachs, who identify themselves as a separate ethnic group until modern times, as well as the ethnonym "Vlachs" and its derivatives in the form of toponyms and personal names are at the core of the joint research. Hereby, historical and archaeological research is combined with Digital Humanities.<br /><br />The project, which was successfully submitted by the project coordinators Doz. Dr. Mihailo Popović and Prof. Dr. Toni Filiposki, is funded by the Centre for International Cooperation & Mobility (ICM) of the Austrian Agency for International Cooperation in Education and Research (OeAD-GmbH) for two years (2016-18) and forms an additional case study within DPP. <br /><br />Project teams:<br /><br />Toni Filiposki (project leader / Skopje), Boban Petrovski (Skopje), Nikola Minov (Skopje), Vladimir Kuhar (Skopje), Boban Gjorgjievski (Skopje)<br /><br />Mihailo Popović (project leader / Vienna), Jelena Nikić (Vienna), David Schmid (Vienna)</p>

<p> <strong>OpenAtlas</strong></p>' WHERE name = 'intro' AND language = 'de';